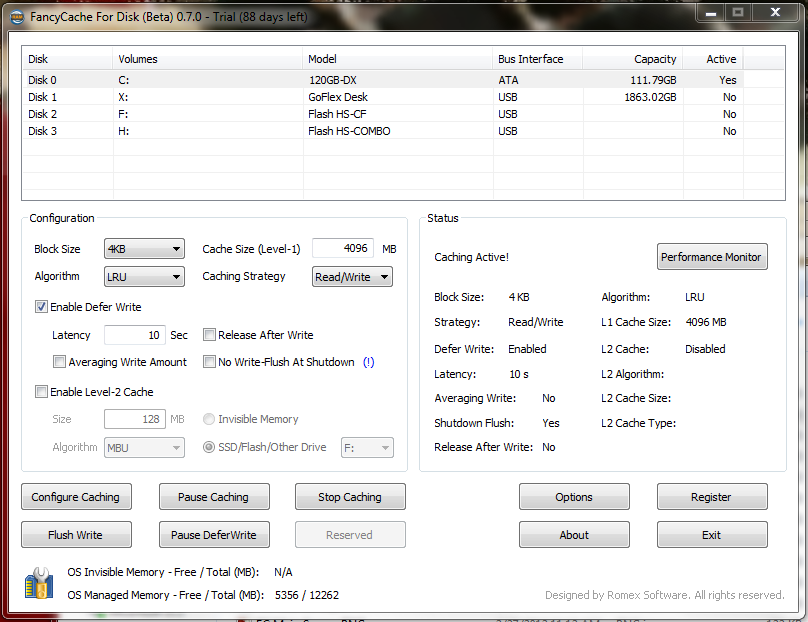

Clicking on C: will let us modify settings, so let’s select 4K blocks, set the cache size to 4096MB, enable deferred writes, then select Start Caching. That’s it. You’re now caching.

You can check the active settings in the Status area. It confirms that the settings are the ones chosen and that the cache is active.

Now, we can look at the performance monitor by clicking the button in the status window:

Right after starting the cache, the hit rate is practically 0%. As time passes and the workload builds, the right data starts to get cached. Here is the performance monitor during an intense, protracted workload:

A little time and data can do wonders. The hit rate during a benchmarking session is almost 100%. This means virtually every request is being serviced out of the cache at light speed.
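To see why the hit rate climbs from nothing to nearly 100%, here is a minimal sketch of a cache warming up. It assumes a simple least-recently-used policy and a hypothetical workload that keeps hitting the same 1,000 blocks; FancyCache's actual algorithm isn't described here, so `LRUBlockCache`, `hit_rates`, and all the sizes are illustrative only.

```python
import random
from collections import OrderedDict


class LRUBlockCache:
    """Toy read cache that keeps the most recently used block numbers."""

    def __init__(self, capacity_blocks):
        self.capacity = capacity_blocks
        self.blocks = OrderedDict()
        self.hits = 0
        self.misses = 0

    def read(self, block):
        """Return True on a cache hit, False on a miss (block is then cached)."""
        if block in self.blocks:
            self.blocks.move_to_end(block)  # mark as most recently used
            self.hits += 1
            return True
        self.misses += 1
        self.blocks[block] = True
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)  # evict the least recently used
        return False


def hit_rates(cache, workload, window):
    """Return the hit rate of each consecutive window of requests."""
    rates = []
    for start in range(0, len(workload), window):
        chunk = workload[start:start + window]
        hits = sum(cache.read(block) for block in chunk)
        rates.append(hits / len(chunk))
    return rates


rng = random.Random(0)
# Hypothetical benchmark: 20,000 random reads over a 1,000-block working set.
workload = [rng.randrange(1000) for _ in range(20000)]
cache = LRUBlockCache(capacity_blocks=2000)  # cache is larger than the working set
rates = hit_rates(cache, workload, window=2000)
print([round(rate, 2) for rate in rates])
```

The first window is mostly misses because the cache starts empty. Once the whole working set has been read at least once, every later request is a hit, which is the same shape the performance monitor shows during a long benchmark run.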

BENCHMARKS

The particular SSD in this test, a 120GB Mushkin Chronos Deluxe, is being used as the system drive. It’s had weeks to settle in, and performance has become quite average. So before looking at benches with caching enabled, let’s get a baseline. Although this run with Anvil’s Storage Utilities uses almost incompressible data, performance is still well below where it is fresh out of the box. The drive is clearly in a used, steady state. So can a little caching help improve the performance?

That would be a most definite yes! The above numbers are just plain silly, showing off what 1333MHz DDR3 CAS 9 can do when accelerating a modern SSD, even one suffering the effects of performance degradation. But something else is going on here. Those face-melting 511,167 4K random read IOPS? They’re CPU limited. That is, the number one performance limitation in this particular circumstance is the CPU. Even a small increase in CPU performance could greatly increase the result here.
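A quick back-of-the-envelope calculation puts that 511,167 figure in perspective. The sketch below converts the IOPS result to throughput and compares it with the theoretical peak of a single DDR3-1333 channel (roughly 1333 MT/s times 8 bytes per transfer). The number of memory channels in the test system isn't stated, so using one channel is an assumption, and a conservative one.

```python
IOPS = 511_167            # 4K random read result quoted above
BLOCK_BYTES = 4 * 1024    # 4K transfer size

cache_bytes_per_s = IOPS * BLOCK_BYTES

# Theoretical peak of one DDR3-1333 channel: ~1333 MT/s x 8 bytes per transfer.
# Assumption: a single channel; the test system's memory layout isn't given.
DDR3_1333_CHANNEL_BYTES_PER_S = 1333 * 10**6 * 8

share_of_channel = cache_bytes_per_s / DDR3_1333_CHANNEL_BYTES_PER_S

print(f"{cache_bytes_per_s / 1e9:.2f} GB/s served from the cache")
print(f"{DDR3_1333_CHANNEL_BYTES_PER_S / 1e9:.2f} GB/s theoretical per-channel peak")
print(f"{share_of_channel:.0%} of one channel")
```

The cache is moving about 2.09 GB/s, or roughly a fifth of what one memory channel can deliver on paper. That leaves the RAM with plenty of headroom, which fits with the CPU being the limit on how many requests get serviced.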

The SSD Review The Worlds Dedicated SSD Education and Review Resource |

This article talks about making a hybrid drive as an option. FancyCache requires an “L1” (Memory) amount minimum of 128 MB, there are no “L2” only caches (which would be an SSD). Also, the SSD cache is not persistent either – there is no mechanism in place to recover the data from a power failure or blue screen. Hopefully this hybrid drive option will be added later.

Additionally, there are not only data loss issues but also data corruption issues when using block-based lazy writes. FancyCache’s main competition has had many issues with drives slowly becoming more and more corrupt over time. FancyCache calls out specific scenarios in which you should use their product: in general, when the Windows read caching solution is insufficient for your program. The write caching doesn’t even come into play; it is an acceptable risk only if you have self error checking programs and data (or are dealing with a scenario where data corruption only adds a bit of static).

Please compare with other similar solutions, e.g. SuperCache by SuperSpeed.

Wow, just wow! I have a 3930K that can hit 5.1GHz, though it takes 1.5v so I typically keep my baby around 4.6GHz (1.38v, under water btw), and I am using 16GB of DDR3-2133 9-11-10-28 (G.Skill Ripjaws Z) running at 2400 10-11-11-30, with primary storage being a Samsung 830 256GB SSD….

I am going to try this out this week, and see if I can’t break 1mil IOPS in both read and write! 50% more cores, 3x as many threads, AND a higher clock speed as well as 2x as many memory channels, faster memory…. I am pretty pumped to see what this can do!

Shouldn’t the operating system do this automatically? I know Linux does, and I’d say Windows should have the same feature.

The main difference (besides the difference in the cache algorithm) is the ability to delay writes to the hard disk for seconds or even longer. I set my write-back delay to 2 minutes on the drive I use to develop software (since all code gets checked into SVN there is limited danger). The default cache in Windows does not allow you to do that because it is potentially dangerous.
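The trade-off with delayed writes can be sketched with a toy model. This is not how FancyCache is implemented; `DeferredWriteCache` and its methods are hypothetical names, and time is passed in by hand so the example stays deterministic. It only illustrates the idea: repeated writes to the same block are merged into one disk write, and anything still waiting in memory is lost if the power goes out.

```python
class DeferredWriteCache:
    """Toy write-back cache: holds dirty blocks and flushes them after a delay."""

    def __init__(self, delay_s):
        self.delay_s = delay_s
        self.dirty = {}        # block -> (latest data, time the block first became dirty)
        self.disk = {}         # block -> data that actually reached the disk
        self.disk_writes = 0

    def write(self, block, data, now):
        """Buffer a write in memory, overwriting any pending data for the block."""
        self.flush_due(now)
        first_dirtied = self.dirty.get(block, (None, now))[1]
        self.dirty[block] = (data, first_dirtied)

    def flush_due(self, now):
        """Write out every block that has been dirty for at least delay_s seconds."""
        due = [b for b, (_, t) in self.dirty.items() if now - t >= self.delay_s]
        for block in due:
            data, _ = self.dirty.pop(block)
            self.disk[block] = data
            self.disk_writes += 1

    def power_failure(self):
        """Drop everything still in memory and report how many blocks were lost."""
        lost = len(self.dirty)
        self.dirty.clear()
        return lost


cache = DeferredWriteCache(delay_s=120)      # 2 minute delay, as in the comment above
for second in range(100):                    # 100 saves to the same block
    cache.write(block=7, data=f"rev {second}", now=second)
writes_before_flush = cache.disk_writes      # nothing has reached the disk yet

cache.flush_due(now=120)                     # the delay expires
writes_after_flush = cache.disk_writes       # 100 saves became a single disk write

cache.write(block=8, data="unsaved", now=121)
lost_blocks = cache.power_failure()          # block 8 never reached the disk

print(writes_before_flush, writes_after_flush, lost_blocks)
```

One hundred writes collapse into one trip to the disk, which is where the speedup comes from. The last two lines show the other side: block 8 was acknowledged but never written, which is the risk being described when the data isn't backed up somewhere like version control.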