INSIDE THE NYTRO MEGARAID

As much as we have honed our well-earned reputation for ripping things apart, LSI warned that disassembling the Nytro MegaRAID probably wasn’t a good idea and could easily destroy a very expensive product. Consequently, our tour in and around the Nytro MegaRAID card will be pretty short today.

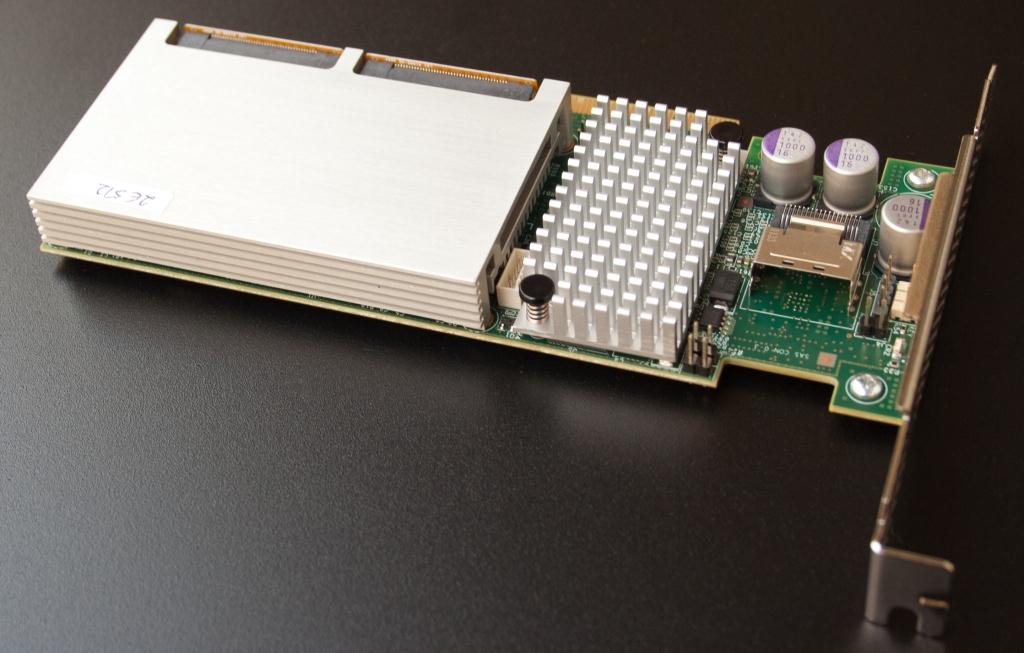

Under the silver-bladed heatsink lies the LSI SAS 2208 RoC (RAID-on-Chip) that powers the device. To the right of that is the SFF-8087 quad-port connector which, in this case, will likely be connected to an expander to multiply the number of hard drives. That’s all pretty standard stuff, really. The most important characteristic of the LSI Nytro MegaRAID is the pair of LSI SandForce-powered flash modules attached to it. Much like those on the Nytro Warp Drive, they reside under a cover, inside a housing out of plain view.

Both the Nytro MegaRAID and Nytro Warp Drive cards use the LSI SandForce SF-2582 flash storage processor. The flash modules connect to the RoC over SATA, using two of the ports not claimed by the internal SFF-8087 connector; the remaining two of the eight total ports go unused. The SF-2582 is similar to the SF-2281 found in a large percentage of today’s consumer SSDs, except that the last ‘2’ in the part number denotes 8 channels with 16 byte lanes. This makes it easier to build a high-performance 512GB eMLC flash module, as SF-2281-based 512GB drives are slow in some respects due to the way the flash is interfaced over 8 byte lanes. The second digit, the 5, means it’s an enterprise controller, so better SMART data, power-loss-protection circuitry support, and military erase support are on offer as well.
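The naming rules above can be captured in a few lines. This is a minimal sketch that only knows the two digits the review explains: the second digit (5 for an enterprise part) and the last digit (2 for 16 byte lanes, 1 for the 8 byte lanes of the SF-2281). The function name `decode_sandforce` is hypothetical, and treating every second digit other than 5 as a client part is an assumption, not an official SandForce decoding table.

```python
def decode_sandforce(part: str) -> dict:
    """Decode a SandForce SF-2xxx part number using only the rules described in the review.

    Hypothetical helper: not an official SandForce/LSI decoding table.
    """
    text = part.strip().upper()
    digits = text[3:] if text.startswith("SF-") else text
    if len(digits) != 4 or not digits.isdigit():
        raise ValueError(f"unexpected part number: {part!r}")

    # Second digit: '5' marks an enterprise controller (SF-2582);
    # anything else is treated as a client part here, which is an assumption.
    market = "enterprise" if digits[1] == "5" else "client"

    # Last digit: '2' -> 16 byte lanes (SF-2582), '1' -> 8 byte lanes (SF-2281).
    byte_lanes = {"1": 8, "2": 16}.get(digits[3])
    if byte_lanes is None:
        raise ValueError(f"last digit {digits[3]!r} is not covered by the review")

    return {"market": market, "byte_lanes": byte_lanes}


print(decode_sandforce("SF-2582"))  # {'market': 'enterprise', 'byte_lanes': 16}
print(decode_sandforce("SF-2281"))  # {'market': 'client', 'byte_lanes': 8}
```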

The LSI Nytro MegaRAID uses Toshiba eMLC built on a 32nm process. Running at 133MT/s, SandForce drives using Toggle flash are typically smoking fast. Although sub-32nm Toggle flash is faster nowadays, the 32nm eMLC is rated for 30,000 P/E cycles, versus the 3,000 of newer Toggle flash. Each package on the drive contains eight dice of 32Gbit (4GB) each, so it takes 16 packages to reach the 512GB capacity of each of the two caching drives.
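The package count follows from simple arithmetic. Here is a short sketch using the die density and dice-per-package figures from the paragraph above; the helper name `packages_needed` is hypothetical.

```python
GBIT_PER_DIE = 32        # 32Gbit Toshiba eMLC die
DICE_PER_PACKAGE = 8     # eight dice in each package
DRIVE_CAPACITY_GB = 512  # raw flash per caching drive
DRIVES = 2               # two SandForce modules on the card


def packages_needed(capacity_gb: int, gbit_per_die: int, dice_per_package: int) -> int:
    gb_per_die = gbit_per_die // 8                  # 32Gbit -> 4GB
    gb_per_package = gb_per_die * dice_per_package  # 4GB x 8 -> 32GB
    return -(-capacity_gb // gb_per_package)        # ceiling division


per_drive = packages_needed(DRIVE_CAPACITY_GB, GBIT_PER_DIE, DICE_PER_PACKAGE)
print(per_drive, per_drive * DRIVES)  # 16 32
```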

The two SSDs are 28% overprovisioned, not counting the ~7% spare area, which leaves ~370GB per drive. Out of the 1024GB of total raw capacity, that works out to about 740GB of total “user addressable space”. That label is a misnomer, though; you can’t directly access either SSD.
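The capacity figures can be checked the same way. This sketch applies only the 28% overprovisioning figure to the 512GB of raw flash per drive and leaves the ~7% spare area out, as the paragraph does; the function name `usable_gb` is hypothetical.

```python
RAW_GB_PER_DRIVE = 512
OVERPROVISIONING = 0.28  # fraction of raw flash held back from caching
DRIVES = 2


def usable_gb(raw_gb: float, overprovisioning: float) -> float:
    """Capacity left for caching after overprovisioning (spare area not modelled)."""
    return raw_gb * (1 - overprovisioning)


per_drive = usable_gb(RAW_GB_PER_DRIVE, OVERPROVISIONING)
total = per_drive * DRIVES
print(f"{per_drive:.0f}GB per drive, {total:.0f}GB total")  # 369GB per drive, 737GB total
```

Rounded, those are the ~370GB and ~740GB quoted above.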

Prospective purchasers would be well served by picking up a battery backup unit, too. The Nytro MegaRAID family uses its own cache protection technology, the Nytro MegaRAID SCM01.

On the back, we see 1024MB of Micron ECC 1333MHz DDR3 cache used for the SAS2208.

A WORD ON NYTRO CACHING SOFTWARE

LSI’s Nytro caching software is slick. All of the information about which logical block addresses are cached, and which aren’t, is handled by the RoC rather than by a software layer running on the host OS. This does save some host overhead, although most software caching isn’t especially compute- or resource-intensive anyway. What you basically get is complete transparency to the operating system.

Between all but a handful of test runs, we clear the cache and disassociate the cache drives. After that, each test starts off running from the hard disks while the Nytro caching software works out which LBAs (logical block addresses) are hot. To do that, LBAs first have to be accessed repeatedly, so the slower they are accessed, the longer it takes to learn which ones to cache. All of these decisions are made by the SAS2208, which keeps track of which LBAs are requested and how many times they’ve been used, sort of like your iTunes playlists.
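LSI doesn’t document the SAS2208’s admission policy here, so the following is only a generic frequency-based sketch of the idea described above, not Nytro’s actual algorithm. `HotLbaTracker`, the `promote_after` threshold, and the least-frequently-used eviction rule are all hypothetical choices made for illustration: an LBA is promoted into the flash cache once it has been seen a set number of times, and when the cache is full it only displaces an LBA that has been used less often.

```python
from collections import Counter


class HotLbaTracker:
    """Hypothetical frequency-based cache admission sketch (not LSI's algorithm)."""

    def __init__(self, capacity: int, promote_after: int = 2):
        self.capacity = capacity            # max LBAs held in the flash cache
        self.promote_after = promote_after  # accesses needed before an LBA counts as hot
        self.hits = Counter()               # access count per LBA, like a play count
        self.cached = set()                 # LBAs currently held in the flash cache

    def access(self, lba: int) -> bool:
        """Record an access; return True if it would have been served from cache."""
        hit = lba in self.cached
        self.hits[lba] += 1
        if not hit and self.hits[lba] >= self.promote_after:
            self._admit(lba)
        return hit

    def _admit(self, lba: int) -> None:
        if len(self.cached) >= self.capacity:
            # Find the least-frequently-used cached LBA; evict it only if the newcomer is hotter.
            coldest = min(self.cached, key=lambda cached_lba: self.hits[cached_lba])
            if self.hits[coldest] >= self.hits[lba]:
                return
            self.cached.remove(coldest)
        self.cached.add(lba)


tracker = HotLbaTracker(capacity=2, promote_after=2)
for lba in [10, 10, 10, 20, 20]:
    tracker.access(lba)
print(sorted(tracker.cached))  # [10, 20]
```

Until an LBA crosses the threshold, every access still goes to the hard disks, which is why a freshly cleared cache needs repeated accesses before it helps.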

If we were to try to fully cache a 400GB range of LBAs using full-span random accesses, it might take a rather long time to fully load the eMLC cache. By contrast, a 20GB test file caches almost instantly; it’s merely a matter of statistics. With HDDs that have rather low random performance, it might take hours to fill the cache with hot LBAs. This isn’t really a problem, though: the cache is a constantly changing list of addresses to add or evict, and a full warm-up from cold is something that would only happen once in a blue moon in normal deployments. Looking at the first half hour or so of logged data in the performance charts, you’ll note that there isn’t much going on until the caching kicks in, at which point performance jumps almost immediately. So the slower the underlying drives and the larger the virtual disk, the longer the cache takes to deliver peak performance.
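The “matter of statistics” can be made concrete with a back-of-the-envelope model. Assume (hypothetically) that the cache tracks fixed-size regions, that a region must be hit `hits_needed` times before it is promoted, and that the workload issues uniformly random requests at a fixed IOPS rate. Each request then lands on a given region with probability 1/regions, so the expected number of requests before that region collects `hits_needed` hits is `hits_needed × regions`. The region size, threshold, and IOPS below are placeholders, not Nytro parameters or measured results.

```python
GB = 10**9


def expected_warmup_seconds(span_bytes: int, region_bytes: int,
                            hits_needed: int, iops: float) -> float:
    """Expected time for one region in a uniformly random workload to become hot.

    Hypothetical model: the number of requests until a region collects
    hits_needed hits is negative binomial with p = 1/regions, whose mean is
    hits_needed * regions. Warming *every* region takes longer still.
    """
    regions = -(-span_bytes // region_bytes)  # ceiling division
    return hits_needed * regions / iops


# Placeholder parameters: 1MB regions, 2 hits to promote, 500 random IOPS from the HDDs.
small = expected_warmup_seconds(20 * GB, 10**6, hits_needed=2, iops=500)
large = expected_warmup_seconds(400 * GB, 10**6, hits_needed=2, iops=500)
print(small, large, large / small)  # 80.0 1600.0 20.0
```

The absolute numbers depend entirely on the assumed parameters, but the shape matches the review: warm-up time grows linearly with the span being cached and shrinks as the underlying drives deliver more random IOPS.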

…In other words, once the caching starts kicking in, performance starts accelerating.

The SSD Review The Worlds Dedicated SSD Education and Review Resource |

Just, WOW!

Another amazing review! Keep up the hard work. I’ve continued to be impressed by the rich content on this site.

Great-looking piece from LSI and nice review, Chris! What gets me, though, is the price of the unit. When you consider that you can plug an SSD into a 9270 with CacheCade for a considerably cheaper end product, the 1 extra port gained by having onboard NAND just doesn’t make fiscal sense.

What would be exciting would be to see the Nytro’s flash set up as 4 x X GB units in RAID 0 natively (just as you already can with CacheCade and SSDs), without losing any more ports.

It’s great to see LSI developing their PCIe 3.0 offering, and I look forward to seeing where they take it in the future.