SECTION I: UNDERSTANDING RAID AND RAID LEVELS

If you’re thinking about running a RAID setup, read this section of the guide, as each RAID level has positives and negatives. The guide does not rely on RAID, as you can run a single-drive server; however, we will be going with RAID 5. Use the RAID calculator to find your final array size. The following information is from LSI’s comprehensive user guide:

RAID DESCRIPTION

RAID is an array, or group, of multiple independent physical drives that provide high performance and fault tolerance. A RAID drive group improves I/O (input/output) performance and reliability. The RAID drive group appears to the host computer as a single storage unit or as multiple virtual units. I/O is expedited because several drives can be accessed simultaneously.

RAID BENEFITS

RAID drive groups improve data storage reliability and fault tolerance compared to single-drive storage systems. Data loss resulting from a drive failure can be prevented by reconstructing missing data from the remaining drives. RAID has gained popularity because it improves I/O performance and increases storage subsystem reliability.

SUMMARY OF RAID LEVELS

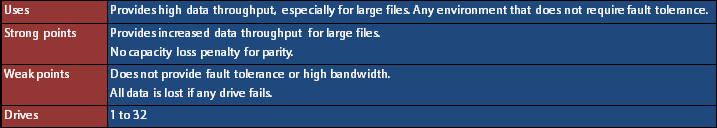

RAID 0

RAID 0 provides disk striping across all drives in the RAID drive group. RAID 0 does not provide any data redundancy, but RAID 0 offers the best performance of any RAID level. RAID 0 breaks up data into smaller segments, and then stripes the data segments across each drive in the drive group. The size of each data segment is determined by the stripe size. RAID 0 offers high bandwidth.
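As a rough illustration of the striping described above, the following Python sketch cuts a buffer into stripe-sized segments and deals them out to the drives in round-robin order. The function name `stripe_raid0` and the in-memory lists standing in for drives are assumptions made for illustration; a real controller works on disk blocks, not Python bytes.

```python
def stripe_raid0(data: bytes, drives: int, stripe_size: int) -> list[list[bytes]]:
    """Deal stripe_size-byte segments of data across the drives, round-robin."""
    if drives < 1 or stripe_size < 1:
        raise ValueError("drives and stripe_size must be positive")
    # One list of segments per (hypothetical) drive.
    layout: list[list[bytes]] = [[] for _ in range(drives)]
    for index, offset in enumerate(range(0, len(data), stripe_size)):
        segment = data[offset:offset + stripe_size]
        layout[index % drives].append(segment)
    return layout


if __name__ == "__main__":
    for drive, segments in enumerate(stripe_raid0(b"ABCDEFGH", drives=2, stripe_size=2)):
        print(f"drive {drive}: {segments}")
```

Because consecutive segments land on different drives, a large read or write can be serviced by several drives at once, which is where the bandwidth gain comes from. Nothing is duplicated, so losing any one drive loses part of every large file.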

RAID 1

In RAID 1, the RAID controller duplicates all data from one drive to a second drive in the drive group. RAID 1 supports an even number of drives from 2 through 32 in a single span. RAID 1 provides complete data redundancy, but at the cost of doubling the required data storage capacity.

RAID 5

RAID 5 includes disk striping at the block level and parity. Parity is the data’s property of being odd or even, and parity checking is used to detect errors in the data. In RAID 5, the parity information is written to all drives. RAID 5 is best suited for networks that perform a lot of small input/output (I/O) transactions simultaneously.

RAID 5 addresses the bottleneck issue for random I/O operations. Because each drive contains both data and parity, numerous writes can take place concurrently.
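To make the role of parity more concrete, here is a minimal Python sketch of single-parity protection using byte-wise XOR, the usual way one parity block per stripe is computed. The helper names `xor_blocks` and `rebuild_missing` are hypothetical, and the sketch ignores how a controller rotates parity across drives; it only shows why one lost block in a stripe can be recomputed from the rest.

```python
from functools import reduce


def xor_blocks(blocks: list[bytes]) -> bytes:
    """XOR equally sized blocks together, byte by byte."""
    if not blocks:
        raise ValueError("at least one block is required")
    if len({len(block) for block in blocks}) != 1:
        raise ValueError("all blocks must be the same length")
    # zip(*blocks) yields one tuple of byte values per byte position.
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def rebuild_missing(surviving: list[bytes], parity: bytes) -> bytes:
    """Recover the single missing data block of a stripe from the rest."""
    return xor_blocks(surviving + [parity])


if __name__ == "__main__":
    stripe = [b"\x01\x02", b"\x0f\x00", b"\xf0\xff"]  # three data blocks
    parity = xor_blocks(stripe)                        # stored on a fourth drive
    # Pretend the drive holding stripe[1] has failed.
    recovered = rebuild_missing([stripe[0], stripe[2]], parity)
    print(recovered == stripe[1])
```

The same XOR that produces the parity block also undoes it, so a rebuild is just the parity calculation run over the surviving blocks.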

RAID 6

RAID 6 is similar to RAID 5 (disk striping and parity), except that instead of one parity block per stripe, there are two. With two independent parity blocks, RAID 6 can survive the loss of any two drives in a virtual drive without losing data. RAID 6 provides a high level of data protection through the use of a second parity block in each stripe. Use RAID 6 for data that requires a very high level of protection from loss.

In the case of a failure of one drive or two drives in a virtual drive, the RAID controller uses the parity blocks to re-create all of the missing information. If two drives in a RAID 6 virtual drive fail, two drive rebuilds are required, one for each drive. These rebuilds do not occur at the same time. The controller rebuilds one failed drive, and then the other failed drive.

RAID 10

RAID 10 is a combination of RAID 0 and RAID 1, and it consists of stripes across mirrored drives. RAID 10 breaks up data into smaller blocks and then mirrors the blocks of data to each RAID 1 drive group. The first RAID 1 drive in each drive group then duplicates its data to the second drive. The size of each block is determined by the stripe size parameter, which is set during the creation of the RAID set. The RAID 1 virtual drives must have the same stripe size.

Spanning is used because one virtual drive is defined across more than one drive group. Virtual drives defined across multiple RAID 1 level drive groups are referred to as RAID level 10 (1+0). Data is striped across drive groups to increase performance by enabling access to multiple drive groups simultaneously.

Each spanned RAID 10 virtual drive can tolerate multiple drive failures, as long as each failure is in a separate drive group. If drive failures occur, less than total drive capacity is available.
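The failure rule above can be sketched in a few lines of Python. This assumes the simplest layout, where each RAID 1 drive group is a mirrored pair and drives 2k and 2k+1 form pair k; that numbering and the function name `raid10_survives` are illustrative assumptions, not something taken from LSI’s guide.

```python
def raid10_survives(failed: set[int], drives: int) -> bool:
    """Return True if a RAID 10 set built from mirrored pairs still holds all data.

    Assumed layout (hypothetical): drives 2k and 2k+1 form mirrored pair k.
    """
    if drives < 4 or drives % 2:
        raise ValueError("this sketch expects an even number of drives, at least 4")
    if any(drive < 0 or drive >= drives for drive in failed):
        raise ValueError("failed drive index out of range")
    # Data is lost only when both members of the same mirrored pair have failed.
    return not any({2 * k, 2 * k + 1} <= failed for k in range(drives // 2))


if __name__ == "__main__":
    print(raid10_survives({0, 2}, drives=4))  # one failure in each pair
    print(raid10_survives({0, 1}, drives=4))  # both drives of pair 0
```

With four drives, the array survives two failures only when they hit different pairs, which is why RAID 10 is not guaranteed to tolerate any two drive failures the way RAID 6 is.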

Configure RAID 10 by spanning two contiguous RAID 1 virtual drives, up to the maximum number of supported devices for the controller. RAID 10 supports a maximum of 8 spans, with a maximum of 32 drives per span. You must use an even number of drives in each RAID 10 virtual drive in the span.

There are MANY more RAID levels, but these are primarily the conventional ones adequate for our server build.
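For a quick estimate of final array size without an online calculator, the usable capacity of the levels above follows from how many drives’ worth of space goes to mirroring or parity. The Python sketch below assumes equally sized drives and ignores formatting overhead; the function name `usable_capacity_tb`, the minimum drive counts, and the 3 TB drive size in the example are assumptions for illustration, so check your controller’s documentation for its actual limits.

```python
def usable_capacity_tb(level: int, drives: int, size_tb: float) -> float:
    """Estimate usable capacity in TB for an array of equally sized drives."""
    if drives < 1 or size_tb <= 0:
        raise ValueError("drives and size_tb must be positive")
    # (assumed minimum drive count, number of drives' worth of usable space)
    if level == 0:
        minimum, data_drives = 1, drives          # striping only, no redundancy
    elif level == 1:
        minimum, data_drives = 2, drives / 2      # every drive is mirrored
    elif level == 5:
        minimum, data_drives = 3, drives - 1      # one drive's worth of parity
    elif level == 6:
        minimum, data_drives = 4, drives - 2      # two drives' worth of parity
    elif level == 10:
        minimum, data_drives = 4, drives / 2      # striped mirrors
    else:
        raise ValueError(f"RAID level {level} is not covered by this sketch")
    if drives < minimum:
        raise ValueError(f"RAID {level} needs at least {minimum} drives here")
    if level in (1, 10) and drives % 2:
        raise ValueError(f"RAID {level} needs an even number of drives")
    return data_drives * size_tb


if __name__ == "__main__":
    # Hypothetical example: eight 3 TB drives.
    for level in (0, 5, 6, 10):
        print(f"RAID {level}: {usable_capacity_tb(level, 8, 3.0)} TB usable")
```

Operating systems usually report capacity in binary units, so the figure shown in Windows will be somewhat lower than the decimal terabytes computed here.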

The SSD Review The Worlds Dedicated SSD Education and Review Resource |

how much did this cost all together?

about 50k all together

The system before the HDDs, LSI card, PSU and case was about 5000. Factor in about 3000 for the sponsored equipment IF you need eight of those hard drives. A stingy builder could probably put this exact system together for under 7000 I would bet

Hey Andy,

The total price is listed in the components and conclusions section, but there is no precise amount.

Since we didn’t prioritize heavy gaming or heavy usage, our components are a tad older. If you were to take the present-day updated equivalent components (for example, a 3770k, GTX 680, and a better motherboard), it comes out to roughly $5000 average; of course, give or take $750 depending on where you live and how your prices are.

If you want the identical price to what we paid for ours during the time we bought them, it would come out to that price. If you can find the exact components we’re using present day, you’re looking at around $3500 total!

Again, it’s just a matter of where you live, what you plan on buying, and what your prices and availability look like.

Thanks!

Sorry for the late reply; my comments kept getting marked as spam.

about 50k

Timely article! I’m just going to start a backup PC build using mostly parts on the shelf from previous builds and an LSI 9265-8i about to be replaced by an LSI 9271-8iCC and new drives. Question: will a copy of Win 7 Home Premium work for the OS? The 2 workstations on our home LAN (wired gigabit) are using Win 7-64 Home Premium and Win 7-64 Pro.

Thanks Cal

Hey Cal,

Make sure to read Section IV (page 10) if you plan on using a 3TB+ drive/array as your OS boot drive.

Aside from that, Win 7 Home Premium should work just fine!

Hi again.

The beat-up old case that was a demo on the back shelf at my local NCIX store has room for 15 drives: 8 2T enterprise HDDs on a 9265-8i as a RAID-5 w/ spare, 5 2T on the MB as a RAID-10 w/ spare, a SATA CD/DVD, and either a 1T SATA or a 320G IDE for the OS.

My ASUS MB started crashing after I tried to add RAM so I bought a MSI MB to use the X58 CPU and RAM for the backup build.

Low BIOS RAM: In your write-up you made a quick aside about difficult access to the LSI WebBIOS with the limited BIOS size in the MB that you used. I have an LSI 9211-8i in an Intel D975BX2 with that same issue and I expect the same problem with a 9265 in the MSI X58M as well. I could not find a reference to the solution at LSI, probably my searching abilities, but I did find a reference at https://tinkertry.com/lsi-knowledgebase-article-points-tinkertry-method-configure-lsi-raid-z68-motherboard/ which pointed to an unavailable LSI KnowledgeBase Article 16602. Also https://tinkertry.com/lsi92658iesxi5/ .

Thanks for the help. Cal

Hey Cal,

I hope I’m reading the right part, but it seems like you’re having trouble getting into WebBIOS.

I feared this may come up. I was hoping that it was an issue with my Gigabyte board, but I can see now that it is not.

The LSI diagnostic check, while helpful, takes its sweet time. However, there are a few things that make it go haywire, and mass rebooting is the best way to fix these problems.

I’ll outline a couple, starting from WebBIOS:

1. CTRL+H not triggering WebBIOS – this can happen for a couple of reasons. One of them is mashing CTRL+H. This is a big no-no, as it’ll just hang if you do that. Press it once, and it’ll load.

The second reason is more subtle, and probably what you’re suffering from. WebBIOS says that it will load once the computer posts. Well, if it posts, we’ll go into Windows (or a drive boot failure if you haven’t installed an OS), not WebBIOS. The trick is to get into your boot menu (usually F12). I’m not sure what the MSI boot menu looks like, but if you’re familiar with it, you should end up with an odd entry. Mine was something along the lines of “LSI CD ROM”. It could also be something having to do with SCSI or PCI-E RAID. Regardless, the out-of-place entry will be the WebBIOS boot utility.

Now, the server may restart when you pick this, or it may go straight into WebBIOS. If it does restart, let it load normally and hit CTRL+H at the prompt once without mashing anything else. It’ll load right into WebBIOS.

2. Failure to load into options – an exceptionally aggravating problem with the diagnostic check is that sometimes your keyboard will not register the keys you mash to get into certain options. For example, as you mash DELETE to get into your BIOS, or F12 to get into your boot menu, after the CTRL+H WebBIOS prompt the server will either hang, or continue through as if oblivious to your key registration.

If this happens, restart and try again. The CTRL+H prompt stays on screen for about 15 seconds, so while mashing from the BIOS post screen up to the prompt, hit the respective key you’re mashing once, a couple of seconds before the CTRL+H prompt goes away. This will ensure that you will get into whatever option you’re trying to get into.

For some reason the diagnostic has a short and long memory. Sometimes you just need to mash for a few seconds to get into an option; other times you need to do it right from post up to the final seconds before CTRL+H prompt goes away and boots. Super annoying, especially considering how long the diagnostic check goes on for.

Seems my strange workaround on WebBIOS entry on Z68 motherboards still applies (it was always a little goofy to have to do this, but even LSI points to this same workaround)

https://tinkertry.com/webbios/

with SSD Review mention over here:

https://tinkertry.com/lsi-knowledgebase-article-points-tinkertry-method-configure-lsi-raid-z68-motherboard/

Thanks for posting your saga, Deepak!

No problem, and thank you as well for the contribution Paul 🙂

Are you guys on weed? Home server and you choose to bypass a key feature for the home or SOHO user, Storage Spaces. You choose to use very expensive drives and hardware RAID and try to aim this at the home user, WTF?

Choosing to stick an OS on such a large array is also just plain over-complicated. You could have used a cheap pair of SATA drives on the on-board SATA ports in RAID-1 or AHCI with dynamic RAID-1 and kept the pool for what is wanted. Now you have an array that will be turning and burning 24/7 as there is an OS on it.

No mention of the networking headaches that arise with Server 2012 and its extra bloat that plays havoc with older OSes or network devices.

Sorry, but you guys missed the mark in so many ways.

Thanks for the response and we can see your view of things. Fortunately, we have received several responses to the contrary as well. From our viewpoint, we wanted to approach things from the most understandable level and such that it was a complete picture that could be followed by others. We hope to have accomplished this.

To answer your question, I recall our initial discussions where we wanted to build a system that all could build, using very conservative parts and/or those that have some bite to them and leave ourselves open to build on the initial report in the future. Can the server be upgraded or could it have been built in a different fashion? Absolutely and I am sure you will agree that we could have thrown an SSD in as well… Watch for things as you have suggested in the future as we build on this first report. With the response we have seen thus far, we believe the interest in this subject is much greater than originally thought.

Thanks again.

Just to follow up on what Les posted, yes we left the guide open for people to choose however they wanted to approach the server. We mentioned that you could go hardware RAID, software/motherboard RAID, or something else, including Storage Spaces. Even the OS can be different. There are even more methods, such as unRAID, but it all depends on what the user wants. If a user is going for Windows 8 or WS 2012, Storage Spaces will be advertised for obvious reasons. It’s not something that needs showcasing, but we did mention it in case readers don’t know about it.

Onboard doesn’t always work, and that was the case with our motherboard. When we chose a RAID 1 array with two of the drives, it built it, but the Windows installer didn’t recognize it. Hence, the installer showed the two drives separately and untouched, without any pooling or redundancy. In addition, this forced us to use IDE and did not allow us to get into UEFI or use GPT.

We want to provide the technical analysis of what it is like to build an ultimate home server – the keyword there being ultimate. Therefore, we want to address the most complicated methods and be as comprehensive as we can so people can fine-tune their own procedure, and remove/add steps as they see fit.

We also prioritized our build, which was solely on storage. With the money we saved using older parts, the rest of the budget went into high-end parts, because that is what matters to us the most. We made a note that if users want to add anything extra, such as the ability to play games or encode, then they should be willing to spend more for a mid to high-end GPU. The approach to building can be heavily customized, and we tried our best to offer advice for users that want to do something other than what we did. Of course, we couldn’t cover every single possibility, but we hit the typical ones.

We also made a note that it may be beneficial to the user to use an SSD as their main boot drive, provided they are willing to spend the money. It is not a requirement, and it would’ve indeed led to an extra step on making a backup schedule for the SSD directed to the RAID 5 array in case something went awry, a solution we would pick over Storage Spaces.

The way we planned it meant that wasn’t a need, so if the user decided to use the RAID 5 array as their primary boot drive, backing up wouldn’t have to be done at all. Yes it is more complicated but it is worth it in the long haul.

We also mentioned and addressed the networking problems by doing a walk-through. We have used WHS 2011, and the same problems are in WS 2012. Once configured, there should be no problems at all. Our server has two other NAS units, two laptops, and ten desktops connected to it without any problems.

Finally, on the subject of Storage Spaces and why we chose the array over it – we don’t want to leave the decision to Microsoft. WHS V1 had Drive Extender, and WHS 2011 did not, which meant that users either had to use an old, unsupported operating system, or upgrade and lose that feature. WS 2012 brings it back, yes, but there is no telling what the future holds. We don’t want the readers to have to deal with this problem should it ever arise again, and if anything we would rather provide them with an easy means to upgrade, considering how quickly Microsoft has killed off all of their previous server operating systems, with WHS 2011 barely lasting a year before getting the EoL stamp.

Hope this clears up the confusion.

1 Not his fault you’re broke… 2 Onboard RAID sucks, it’s “Fake RAID”.

3 raid 1 really? why not raid 4, 5 or 6?

4

Les, very good review… You’re almost in my mind, as atm I am trying to make a parts list for a similar home project (9260-4i though).

Is the system available yet for some benchmarking?

I mean, since you did a massive build with a very-very helpful & detailed article, it would be nice to measure the performance and maybe time the LAN transfer by the onboard gigabit adapter.

Let’s ask Deepak as this review was his baby… stay tuned…

Hey Felix,

Great question. I actually ran four simultaneous copy tests from two different sources to the server, including a 600GB live backup session. Overall speed was pretty darn nice, about 35+ MB/s for each copy session, of course varying due to sizes. A 550GB folder of 2.5k files and 141 folders took about 4 hours to copy over, while the other four copies were going on.

We’re waiting on CacheCade at the moment to post proper benchmark results. We got about 650MB/s read, but only about 60MB/s write for random IO testing using CDM, so we’ll see how much CacheCade boosts performance.

The LAN side of it is doing much better, and those numbers will rise once we activate CacheCade. My LAN network is entirely on gigabit speeds, and there were absolutely no hiccups while all of this was going on. It was absolutely seamless.

Been planning a project like this – though thinking of running Linux. What do you see are the pros and cons between Linux and Windows? OS price is my main consideration. Support is offered by some companies (e.g. Red Hat) though I haven’t done my research in that area – I’m on Linux Mint and the forums help enough.

I believe overall Linux will suit you better, but in terms of ease I prefer Windows. Support I find is better for Linux. Keep in mind WS 2012 is new, but if WHS 2011 is anything to go by, solutions are most often offered by forums as you said. Tech support, especially on the MS side, is awful to put it nicely.

I can also add that WS 2012, while a lot more polished, is just not as well documented. If you run into a problem, chances are you’re on your own.

If you are comfortable, definitely use Linux. Just remember what you want out of your server. WS 2012 has everything I want, so I went with it. Give Amahi a try and see if you like it. If not, go with WS 2012, or WHS 2011 if you’re worried about cost.

WHS 2011 is EoL but support will last for about 4-5 years. Just remember it has no Storage Pooling feature.

WOW… I cannot believe you are advising people to not have a backup plan because you are using RAID 5… “so we don’t have to worry about backing-up anything as RAID 5 has redundancy (managed by the LSI 9270-8i, or whatever RAID controller you’re using).” That is by far the worst advice that I have heard in a LONG time. Otherwise a good article.

Hey Brian,

The reason I said that is because the array was used as the main OS drive, and unfortunately WS 2012 doesn’t allow backups on the main drive. At least in my case, it gave me errors whenever I tried setting it, and would always try and look for other drives to backup to.

Aside from that though, if you do have extra hard drives, definitely set up a backup. I made it optional, as WS 2012 has some weird backup options. I had to move my backup folder to a separate drive from the array to actually get the backup process working.

There are so many inexperienced choices in your server build.

First of all, you could have bought or rented a cheap VPS from the net.

I have recently moved my 6 websites (atlantia.online, democratie-virtuala.com, ihl-data.com, e-world.site, democratie-atlanta.org and virtual-democracy.org) from 123systems.net to contabo.com, where I have rented a 4-core, 20 GB RAM, 400 GB SSD server called Zalmoxe with cPanel.

Yes, the cPanel extensions are like 116 dollars per month and the server itself is another 35 dollars per month, but that is very far from the 7000-8000 dollars spent on your build, which is far worse than mine.

You don’t feel comfortable with Linux? With a simple apt-get install or yum or pkg or zypper you can have everything, and Linux is like 8 times faster than Windows Server (which I have also tried at vultr.com).

Read the messages from the system and you are good to go.

Secondly, one cheap 500 W power supply? Let me tell you, if you run your server for like one week, your power supply will be gone, along with your video card and your motherboard.

Not much to lose though… a 4670? Really?

I remember when I cursed all those VPSes for having bad graphics, but my 5670 runs circles around it.

Thirdly, I see now that you are recommending SSDs but you are using HDDs for storing your forums :))))

so I guess their reliability is not so great.

Fourth: the site is really slow for me. You perhaps have bad sectors on your Toshiba RAID array; use RAID 10 next time.

Fifth: your server is gone, you are using Amazon VPSes right now, and you have nonexistent pages… visit my websites :)))

Apollo