BASIC PERFORMANCE

To measure the most basic performance parameters, we start with a secure-erased drive. We write over the entire LBA space twice with sequential writes, then write the drive's full capacity twice more with 4K random writes. Once the drive is prepared, we run each of the following tests for one minute at each queue depth. The throughput tests are similar, except that we've also included fresh (pre-fill) performance numbers.
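The review doesn't say which benchmark tool drives these runs, so the sketch below swaps in fio purely as an illustration. It only builds and prints the command lines for the preconditioning passes and the one-minute 4K runs; it does not execute anything. The device path, the queue-depth list, and the block size and depth used for the fill passes are all hypothetical, and the secure erase that precedes everything is left to the drive vendor's tooling and not shown.

```python
import shlex

# Hypothetical target: a scratch block device. Every step below would overwrite it.
DEVICE = "/dev/nvme0n1"
# Assumption: the review doesn't list its exact queue depths; powers of two up to 512 are a guess.
QUEUE_DEPTHS = [1, 2, 4, 8, 16, 32, 64, 128, 256, 512]


def fio_cmd(name, rw, bs, iodepth, extra=()):
    """Build one fio invocation as an argument list (direct I/O through libaio)."""
    return [
        "fio", f"--name={name}", f"--filename={DEVICE}",
        f"--rw={rw}", f"--bs={bs}", f"--iodepth={iodepth}",
        "--direct=1", "--ioengine=libaio", *extra,
    ]


def build_plan():
    """Preconditioning passes followed by one-minute 4K random runs per queue depth."""
    plan = [
        # Two sequential passes over the entire LBA space (128k / QD 32 are assumptions).
        fio_cmd("seq-fill", "write", "128k", 32, ["--loops=2"]),
        # Two passes of 4K random writes; fio's default random map hits every block once per pass.
        fio_cmd("rand-fill", "randwrite", "4k", 32, ["--loops=2"]),
    ]
    for rw in ("randread", "randwrite"):
        for qd in QUEUE_DEPTHS:
            plan.append(fio_cmd(f"{rw}-qd{qd}", rw, "4k", qd,
                                ["--runtime=60", "--time_based"]))
    return plan


if __name__ == "__main__":
    for cmd in build_plan():
        print(shlex.join(cmd))  # print only; nothing is executed
```

Generating argument lists rather than running them keeps the destructive fill steps behind a deliberate manual step.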

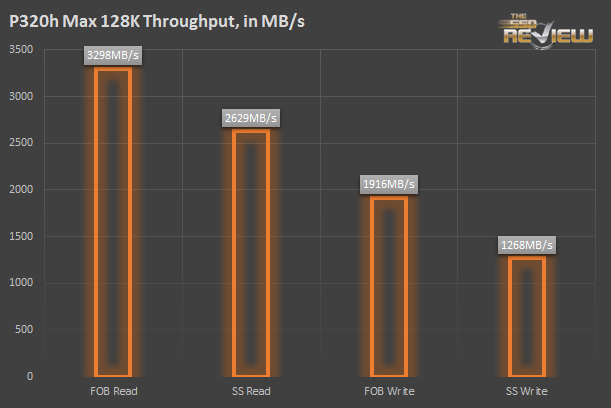

We measure max throughput with sequential 128K reads and writes, taking a baseline fresh number first and then checking again after the 2x random fill. Note that max reads are capped at 3.3GB/s.

The P320h is “limited” to around 3.3GB/s. Why? Because its PCIe Gen 2 interface is good for approximately 3.3GB/s of throughput. Since 785,000 4K IOPS works out to ~3.2GB/s, essentially that same ceiling, the P320h is limited to 785,000 4K random read IOPS by the interface itself. Without the Gen 2 limitation, the P320h could probably do better. The fact that the P320h can saturate its interface with both sequential transfers and small random I/O is truly excellent, and certainly not something seen every day.
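The IOPS-to-bandwidth arithmetic behind that ceiling is easy to check. The sketch below converts 785,000 4K IOPS into GB/s, works out how many 4K IOPS fit under a 3.3GB/s cap, and computes the raw per-direction PCIe Gen 2 payload rate (5 GT/s per lane with 8b/10b encoding). The x8 link width passed in is an assumption on our part, and the helper names are hypothetical; the gap between the raw link rate and the ~3.3GB/s actually measured is consistent with PCIe packet and protocol overhead.

```python
def iops_to_gbps(iops, block_bytes=4096):
    """Bandwidth in decimal GB/s for a given IOPS rate and block size."""
    return iops * block_bytes / 1e9


def gbps_to_iops(gbps, block_bytes=4096):
    """Maximum whole IOPS that fit under a bandwidth ceiling given in decimal GB/s."""
    return round(gbps * 1e9) // block_bytes


def pcie_gen2_gbps(lanes):
    """Raw per-direction PCIe Gen 2 payload rate in GB/s, before protocol overhead."""
    bits_per_s_per_lane = 5_000_000_000 * 8 // 10  # 5 GT/s with 8b/10b encoding
    return lanes * bits_per_s_per_lane / 8 / 1e9


if __name__ == "__main__":
    print(f"{iops_to_gbps(785_000):.2f} GB/s")  # 3.22 GB/s
    print(gbps_to_iops(3.3))                    # 805664
    print(pcie_gen2_gbps(8))                    # 4.0 (assumes an x8 link)
```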

The P320h just scales exceedingly well. It reaches 785K IOPS at QD 256. Below that, performance tapers off as latency drops, while at QD 512 performance stays near peak. Beyond 256 outstanding commands, though, latency starts getting out of hand. For very high queue depth workloads, the high queue depth interrupt coalescing setting is probably more appropriate.
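Why latency climbs past QD 256 follows from Little's law: at steady state, outstanding commands equal IOPS multiplied by mean latency. The sketch below assumes steady state and uses the review's QD 256 / 785K IOPS peak; the QD 512 line is a hypothetical case where IOPS stays flat, which shows that doubling the queue without adding throughput simply doubles average latency.

```python
def mean_latency_us(queue_depth, iops):
    """Little's law at steady state: mean latency = outstanding I/Os / IOPS."""
    return queue_depth / iops * 1e6


if __name__ == "__main__":
    # Review's peak: 785K 4K random read IOPS at QD 256.
    print(f"{mean_latency_us(256, 785_000):.0f} us")  # 326 us
    # Hypothetical: IOPS unchanged at QD 512, so mean latency doubles.
    print(f"{mean_latency_us(512, 785_000):.0f} us")  # 652 us
```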

The P320h serves up excellent write latency, too. Writes also reach maximum performance with 256 outstanding commands; the P320h's driver is optimized to perform best around QD 256, in both Windows and Linux. Even if your workload can't reach QD 256 with writes, it can still get pretty close to peak performance.

LATENCY

We can look at a matrix of average latency at QD 1 to get a standardized latency measurement.

We run latency measurements at 512 bytes, 4K, and 8K block sizes. For each block size, we run one minute of logging for 100% read, 65% read, and 100% write. The results fit in neatly with the previous 4K read and write charts.
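The latency matrix described above is three block sizes crossed with three read/write mixes, each logged for one minute at QD 1. This minimal sketch just enumerates those cells; the dictionary layout and constant names are hypothetical.

```python
from itertools import product

BLOCK_SIZES = [512, 4096, 8192]  # bytes: 512B, 4K, 8K
READ_MIXES = [100, 65, 0]        # percent reads: 100% read, 65% read, 100% write
RUN_SECONDS = 60                 # one minute of logging per cell
QUEUE_DEPTH = 1


def latency_matrix():
    """Every (block size, read %) cell of the QD 1 latency matrix."""
    return [
        {"block_bytes": bs, "read_pct": mix, "qd": QUEUE_DEPTH, "seconds": RUN_SECONDS}
        for bs, mix in product(BLOCK_SIZES, READ_MIXES)
    ]


if __name__ == "__main__":
    runs = latency_matrix()
    total_min = sum(r["seconds"] for r in runs) // 60
    print(len(runs), "runs,", total_min, "minutes")  # 9 runs, 9 minutes
```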

Instead of average latency, this chart shows maximum latency. Max latency is at or above one millisecond (1,000 microseconds) for every QD 1 block size and read/write mix.
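Average and maximum latency can tell very different stories, because a single slow I/O barely moves the mean but defines the max. The sketch below summarizes a list of per-I/O latency samples; the samples are synthetic, chosen only to show the effect, and are not data from this review.

```python
from statistics import mean


def summarize_latency(samples_us):
    """Return (average, maximum) latency in microseconds from per-I/O samples."""
    return mean(samples_us), max(samples_us)


if __name__ == "__main__":
    # Synthetic samples, not review data: 999 fast I/Os plus a single 1,200 us stall.
    samples = [30.0] * 999 + [1200.0]
    avg, worst = summarize_latency(samples)
    print(f"avg {avg:.2f} us, max {worst:.0f} us")  # avg 31.17 us, max 1200 us
```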

The SSD Review The Worlds Dedicated SSD Education and Review Resource |


Micron doesn't own the controller. It is made by IDT.

We are aware of that, thanks. We worded it that way because this is by no means a simple stock implementation of a controller, and it could not have been accomplished without Micron's engineering expertise and software. Great point, though, and perhaps we could reword things just a bit…

Micron has a Minneapolis-based controller team which did much of the work on the controller. Basically, IDT has a stock PCIe controller, but it’s easily modified for custom jobs. Micron refined the design for the P320h. IDT now has a reference NVMe design, but the NVMe standard is far from universal yet. One day, a PCIe SSD won’t need a special driver, but today they do.

Micron developed and owns the chip, IDT just fabs it.

Incorrect. This is the very same controller IDT uses in the new NVMe controllers it has developed.

Just to help you out, this is what was posted at Anand's after they inadvertently stated it was NVMe:

Update: Micron tells us that the P320h doesn’t support NVMe, we are digging to understand how Micron’s controller differs from the NVMe IDT controller with a similar part number.

Our interpretation of the chip appears to be correct as written, and this same ‘structure’ has been used in the SSD industry before. This is not a simple plug-and-play adaptation of a chip, but rather a custom package.

Thanks again.

Yes, it isn't NVMe, but it is an IDT chip; therefore, it was not developed in-house by Micron.

old news

Just needs a few heat sinks and a fan or maybe a water block to keep it cooler.

Todd – What makes you think you know so much about this chip?

Is the RAIN implementation safe enough to use without RAID 1 running outside of it (say, across two 350GB cards)? It sounds good, but if you have a firmware- or controller-related failure, you're still at risk, right?

Is this bootable? And just for kicks, what would the AS SSD results be?