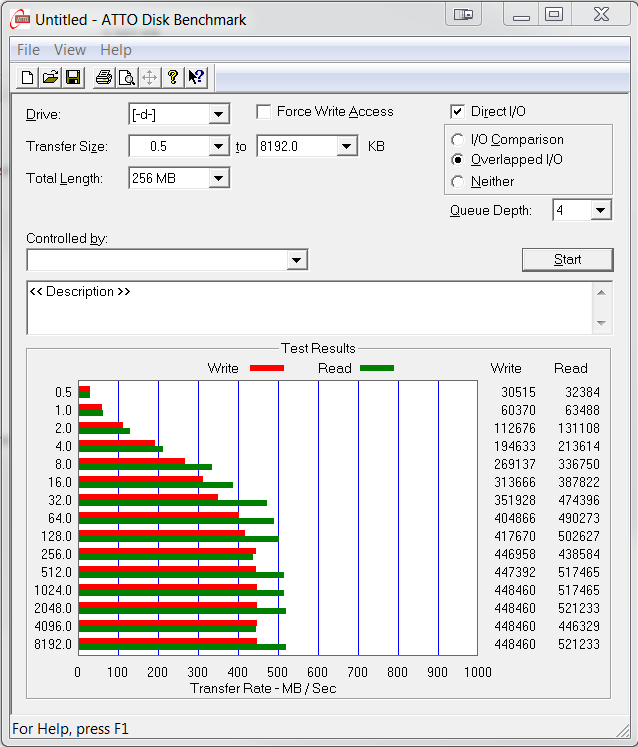

ATTO Disk Benchmark is perhaps one of the oldest benchmarks going and is definitely the main staple for manufacturer performance specifications. ATTO uses RAW, or compressible, data and, for our benchmarks, we use a set length of 256MB and test both the read and write performance of transfer sizes ranging from 0.5KB to 8192KB. Manufacturers prefer this method of testing because it deals with raw (compressible) data rather than random data, which includes incompressible data and, although more realistic, produces lower performance results.
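To make the test layout concrete, here is a small Python sketch of the sweep described above. It assumes the transfer sizes double at each step (0.5KB, 1KB, 2KB and so on up to 8192KB) and that 256MB means 256 × 1024 × 1024 bytes. It only works out how many transfers of each size fill the test length; it is not ATTO's own code.

```python
# Hypothetical sketch of an ATTO-style transfer-size sweep. Assumptions:
# sizes double at each step, and 256MB = 256 * 1024 * 1024 bytes.

TOTAL_LENGTH_BYTES = 256 * 1024 * 1024  # 256MB total test length


def transfer_sizes_kb(start_kb: float = 0.5, stop_kb: int = 8192) -> list[float]:
    """Return transfer sizes (KB) that double from start_kb up to stop_kb inclusive."""
    sizes = []
    size = start_kb
    while size <= stop_kb:
        sizes.append(size)
        size *= 2
    return sizes


def transfers_per_size(total_bytes: int = TOTAL_LENGTH_BYTES) -> dict[float, int]:
    """Map each transfer size (KB) to the number of transfers that fill total_bytes."""
    return {kb: int(total_bytes // (kb * 1024)) for kb in transfer_sizes_kb()}


if __name__ == "__main__":
    for kb, count in transfers_per_size().items():
        print(f"{kb:>7} KB -> {count:>7} transfers")
```

Under these assumptions the sweep covers 15 sizes, from over half a million 0.5KB transfers down to 32 transfers of 8192KB.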

Listed speeds for the Plextor M6S are 520MB/s read and 440MB/s write and, as we can see, ATTO is right on the money. Keep in mind, though, that highly compressible data is easier to transfer than incompressible data, which helps explain why ATTO matches manufacturer specs 99.99% of the time.
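The gap between compressible and incompressible data shows up with any general-purpose compressor. This Python sketch uses the standard `zlib` module on an all-zero buffer (a stand-in for highly compressible data; ATTO's actual data pattern may differ) and on a buffer of random bytes (similar in spirit to AS SSD's incompressible samples). It does not model any SSD controller. It only illustrates why controllers that compress data before writing it, such as the SandForce designs mentioned in the comments, have far less work to do with the first kind of data.

```python
import os
import zlib

BUFFER_SIZE = 1024 * 1024  # 1 MiB sample; an arbitrary illustrative size


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(data, 6)) / len(data)


def compressible_sample(size: int = BUFFER_SIZE) -> bytes:
    """Highly repetitive data: a buffer of zero bytes."""
    return bytes(size)


def incompressible_sample(size: int = BUFFER_SIZE) -> bytes:
    """Random bytes, which a general-purpose compressor cannot shrink."""
    return os.urandom(size)


if __name__ == "__main__":
    print(f"compressible:   {compression_ratio(compressible_sample()):.4f}")
    print(f"incompressible: {compression_ratio(incompressible_sample()):.4f}")
```

The zero buffer shrinks to a tiny fraction of its size, while the random buffer comes out slightly larger than it went in, because `zlib` adds framing overhead it cannot offset.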

The toughest benchmark available for solid state drives is AS SSD, as it relies solely on incompressible data samples when testing performance. For the most part, AS SSD results can be considered the ‘worst case scenario’ for data transfer speeds, and many enthusiasts favor AS SSD for that reason. Transfer speeds are displayed on the left with IOPS results on the right.

Sequential performance dropped a bit in AS SSD, but the Total Score is above 1,000 points, which marks this as an upper tier SSD. We also get our first look at IOPS, with 88,217 IOPS read and 75,319 IOPS write, just below listed specs.
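IOPS and MB/s describe the same work at a given transfer size. Assuming the IOPS figures above were measured with 4KB transfers (an assumption; the block size is not stated here), this Python sketch converts them to throughput in decimal MB/s.

```python
def iops_to_mb_per_s(iops: int, block_bytes: int = 4096) -> float:
    """Throughput in decimal MB/s for `iops` operations of `block_bytes` each.
    The 4096-byte default is an assumption about how the IOPS were measured."""
    return iops * block_bytes / 1_000_000


if __name__ == "__main__":
    for label, iops in (("read", 88_217), ("write", 75_319)):
        print(f"{label}: {iops:,} IOPS x 4KB = {iops_to_mb_per_s(iops):.1f} MB/s")
```

At 4KB per operation, the read result works out to about 361MB/s and the write result to about 308.5MB/s.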

The Plextor M6S also provided decent AS SSD Copy Benchmark results, with transfer speeds for the ISO and Game sample files reaching SATA 3 levels.

ANVIL STORAGE UTILITIES PROFESSIONAL

Anvil Storage Utilities (ASU) is the most complete test bed available for the solid state drive today. The benchmark displays test results not only for throughput but also for IOPS and disk access times. Not only does it have a preset SSD benchmark, it also includes endurance testing and threaded I/O read, write and mixed tests, all of which are very simple to understand and use in our benchmark testing.

Throughput and IOPS are a bit lower than expected in ASU; however, a Total Score above 4,000 (4,031) confirms that this is a strong SSD. Let’s see if we can reach the listed IOPS of 90,000 read and 80,000 write.

Even our best efforts couldn’t match the listed specs in the benchmarks we had on hand. Having said that, there is nothing wrong with what we are seeing here…
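How far below spec are the results? This short Python sketch compares the AS SSD IOPS measured earlier in this review (88,217 read, 75,319 write) with Plextor's listed 90,000 read and 80,000 write, expressing each result as a percentage of the listed figure.

```python
# Figures quoted in this review: AS SSD measured IOPS and Plextor's listed IOPS.
MEASURED_IOPS = {"read": 88_217, "write": 75_319}
LISTED_IOPS = {"read": 90_000, "write": 80_000}


def percent_of_spec(measured: int, listed: int) -> float:
    """Express a measured result as a percentage of the listed specification."""
    return 100 * measured / listed


if __name__ == "__main__":
    for op in ("read", "write"):
        pct = percent_of_spec(MEASURED_IOPS[op], LISTED_IOPS[op])
        print(f"{op}: {MEASURED_IOPS[op]:,} of {LISTED_IOPS[op]:,} IOPS = {pct:.1f}%")
```

Read lands at roughly 98% of spec and write at roughly 94%.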

The SSD Review The Worlds Dedicated SSD Education and Review Resource |

Nice to see another player in the arena.

BUT I can’t help finding them overpriced and somewhat slow.

I appreciate their good name for reliability, but that argument is steadily losing ground nowadays, with even OCZ’s latest offerings being quite reliable.

Samsung, Intel, Crucial: all are reliable and there are just so many options.

Plextor needs to realize that the days when they bested SandForce in almost every aspect are long gone, and price their SSDs accordingly.

This time Plextor rather fails… Even on AnandTech the M6M, like this one, shows low performance? And a few months back it was one of the best?

I really don’t understand your tests; it is all bollocks.

No, man, it’s not all “bollocks”…

It’s just that every SSD is so fast now that you pretty much won’t feel the difference between this and the 840/Vector once your case cover closes.

Reliability and value are the top factors now, and at least a silver badge is justified on this.

Although, as I said before, value is becoming THE factor now.

And yes, some time ago the M5P Extreme was one of the best in all departments, including performance, so why are you asking?

Well, the way of reviewing SSDs should be changed… people get confused and misunderstand.

On that AnandTech site they are pretty much SLAGGING the M6M…

Their tests are completely useless and wrong. Drives, especially SSDs, shouldn’t be tested this way…

The most important thing is real-world use.

I don’t understand you; why are the tests wrong?

He uses all the best and most recognized tools for SSD benchmarking.

These tools are designed to show you differences in performance that are almost invisible in real-world usage.

Like I told you, real-life use is the same across SSDs these days. Showing a graph where Office installs 0.3ns faster on one drive than another is pretty much useless.

Yes, that I knew a long time ago, mate; I have 10 different SSDs.

What I’m trying to say is that these tests go too much in depth, which is not how it should be.

You’re looking at milliseconds and seconds that the end user will NEVER register.

It is useless, and when SSDs are compared in such depth the differences are revealed; since people are naive, once they see such negativity in a review it will put them off choosing or buying that product…

For example, read and write speeds will never go higher than about 500MB/s. SATA III is advertised as 600MB/s, but the real threshold is somewhere between 500 and 550MB/s.
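The 600MB/s figure follows from SATA III’s 6Gb/s line rate and its 8b/10b encoding, which puts 10 bits on the wire for every 8 bits of data. The Python sketch below does that arithmetic. Real drives top out lower, in the 500-550MB/s range mentioned above, because of protocol and command overhead that this sketch does not model.

```python
LINE_RATE_BPS = 6_000_000_000   # SATA III signalling rate: 6 Gb/s on the wire
DATA_BITS, LINE_BITS = 8, 10    # 8b/10b encoding: 8 data bits per 10 line bits


def sata3_payload_mb_per_s() -> float:
    """Maximum data rate in decimal MB/s after 8b/10b encoding overhead.
    Protocol and command overhead are deliberately ignored."""
    data_bits_per_s = LINE_RATE_BPS * DATA_BITS // LINE_BITS  # 4.8 Gb/s of data
    return data_bits_per_s / 8 / 1_000_000                     # bits -> bytes -> MB


if __name__ == "__main__":
    print(f"SATA III payload ceiling: {sata3_payload_mb_per_s():.0f} MB/s")
```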

And as for 4K, some perform a bit worse and some a bit better, but the difference is never noticed in reality when you’re behind the PC. The point is that such in-depth SSD reviews are a waste and drive people not to buy.

But on the other hand, the best SSD is the cheapest one… and more people go this way.

Well, there are people who actually need every IOPS, so such reviews actually matter to them. You don’t have to read the review if you don’t like it.

Also, testing real-world SSD performance is quite hard (and possibly inconsistent); that’s why nobody does it anymore.

Benjamin covered me, but I’d like to add a question.

What do you suggest is the best way to test them?

Maybe with your logic we shouldn’t test them at all and just have a price catalog?

Sorry, man, you have some points, but you can’t come into an enthusiast community and ask for less info on the products…

Well, keep testing as it is, but at the end of the review show that, in reality, the difference between the “best benchmarked” SSDs is very small…

You know, I have installed SSDs from 6 brands, and with the Windows Experience Index the score was always 8.1 or 8.2, which means the difference is minimal.

I am a bit confused as you seem to state one point and then go to another by stating that the tests are too confusing…

From our perspective, the question of how we test is an ongoing topic, and we have settled on our means of testing over much conversation, just like this. If you look at our enterprise tests, they differ significantly from our consumer testing.

Having said that, real-world testing is a nice add-on, but what is valuable to one person is useless to another. Then again, websites with their own ‘testing format’ still do the same as others that elect to use simple programs such as ATTO, AS SSD, Crystal, Anvil and PCMark. In the end, we test performance by transfer speeds with both compressible and incompressible data. You can dress that up any way you wish with your own fancy dancy testing software but, regardless, the same result is accomplished.

From our perspective, we want to test consumer SSDs at the consumer level, in a way the typical consumer can understand. It is an added benefit that we test with software you can run yourself, because many people use this software to compare results and ensure their SSD is working properly. Not every reviewer will agree on every SSD but, let’s face it, this SSD has premium memory, a great processor and a reputable DRAM cache, and is a key entry for a specific crowd.

In the end, if all reviewers had the same report and opinion of a drive, that would take away what is best about the independence of a reputable review site. Let me list two very specific examples where we differed greatly from the rest of the world. The first was the Samsung Series 9, where we identified SEVERE Wi-Fi problems well after so many had said this was the new age in ultrabooks. The second was the Seagate Momentus XT, where we stated time and time again that no visible performance gain was observed… So many others thought this was the best thing since sliced bread.

It brings me way back to when we were the first to post that the page file should be disabled with SSDs, as well as automatic system backups… Not everybody will ever agree, but independence of thought is paramount.

Well said Les.

Keep up the good work!

Hi Les, I think that controller has only 4 channels, not 8; can you clarify that? I’m getting conflicting info.

You are correct. We didn’t have the PDF available to us at that point in time and obviously received different information.

So the idea is to give every single drive an award so that people buy it through your Amazon links? This drive was universally “meh” on every other site, but I see every single drive here gets an award and a sponsored Amazon link.

Excellent review Les!

Is the sequential transfer rate the same from beginning to end? Too many manufacturers cheat in that case (Samsung Evo, Toshiba Q-series).

Explain please… do you mean as file size progresses, or over a timed transfer?

The Evo and Q-series “cheat” because both use an SLC mode for a limited time. The Evo can hold about 500MB/s for some seconds and then drops to about 250MB/s (250GB version). The Toshiba writes the first 50% at over 450MB/s and then drops to <100MB/s, because it writes that first 50% in SLC mode and then writes to the second bit of the NAND.

Benchmarks like AS SSD, CrystalDiskMark and ATTO write too few GB to show this behaviour.
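The behaviour described in the last two comments can be illustrated with a simplified model: a sequential write runs at a burst speed until an SLC-mode cache fills, then continues at a slower sustained speed. In this Python sketch the cache size and both speeds are hypothetical parameters, loosely based on the roughly 500MB/s and 250MB/s figures quoted for the 250GB Evo; real drives vary. It shows why a test that writes less data than the cache holds reports only the burst speed.

```python
def average_write_speed(total_mb: float, cache_mb: float,
                        burst_mb_s: float, sustained_mb_s: float) -> float:
    """Average MB/s for a sequential write that fills an SLC-mode cache at
    burst speed, then writes the remainder at the slower sustained speed.
    Simplified model: the cache starts empty and is not flushed mid-transfer."""
    fast_mb = min(total_mb, cache_mb)   # portion absorbed by the cache
    slow_mb = total_mb - fast_mb        # portion written after the cache fills
    seconds = fast_mb / burst_mb_s + slow_mb / sustained_mb_s
    return total_mb / seconds


if __name__ == "__main__":
    # Hypothetical drive: 3,000MB cache, 500MB/s burst, 250MB/s sustained.
    for size_mb in (1_000, 3_000, 10_000, 20_000):
        avg = average_write_speed(size_mb, 3_000, 500, 250)
        print(f"{size_mb:>6} MB written -> {avg:.1f} MB/s average")
```

With the hypothetical 3,000MB cache, a 1,000MB write averages the full 500MB/s, while a 20,000MB write averages about 270MB/s.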