4KB RANDOM READ/WRITE

To test random 4KB performance properly, we first secure erase the drive to get it into a clean state. Next, we fill the drive by sequentially writing its raw NAND capacity twice. We then precondition it with 4KB random writes at QD256 until it reaches steady state. Finally, we cycle through QD1-256 for 5 minutes each, first for writes and then for reads. All of this is scripted to run with no breaks in between. The last hour of preconditioning, the average IOPS, and the average latency for each QD are graphed below.
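For readers who want to picture the queue depth sweep described above, here is a minimal sketch of how such a schedule could be laid out. It rests on several assumptions that are not in the review: that queue depths step in powers of two from QD1 to QD256, that a tool such as fio drives the workload (the review does not name its tool), and that `/dev/example` stands in for the device under test. The `Step`, `build_sweep`, and `fio_args` names are hypothetical.

```python
from dataclasses import dataclass

STEP_SECONDS = 5 * 60  # the review runs each queue depth for 5 minutes

# Assumption: queue depths step in powers of two from QD1 to QD256.
QUEUE_DEPTHS = [2 ** i for i in range(9)]  # 1, 2, 4, ..., 256


@dataclass(frozen=True)
class Step:
    pattern: str      # "randwrite" or "randread"
    queue_depth: int
    seconds: int


def build_sweep(depths=QUEUE_DEPTHS, seconds=STEP_SECONDS):
    """Return all write steps first, then all read steps."""
    writes = [Step("randwrite", qd, seconds) for qd in depths]
    reads = [Step("randread", qd, seconds) for qd in depths]
    return writes + reads


def fio_args(step, device="/dev/example"):
    """Hypothetical fio command line for one step (tool choice is an assumption)."""
    return [
        "fio",
        f"--name={step.pattern}-qd{step.queue_depth}",
        f"--filename={device}",      # placeholder device path
        f"--rw={step.pattern}",
        "--bs=4k",                   # 4KB transfers, as in the review
        f"--iodepth={step.queue_depth}",
        "--ioengine=libaio",
        "--direct=1",                # bypass the page cache
        "--time_based",
        f"--runtime={step.seconds}",
    ]


sweep = build_sweep()
total_minutes = sum(step.seconds for step in sweep) / 60
print(len(sweep), "steps,", total_minutes, "minutes")
```

Under these assumptions the sweep alone comes to 18 steps and 90 minutes, on top of the secure erase, sequential fill, and preconditioning that precede it.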

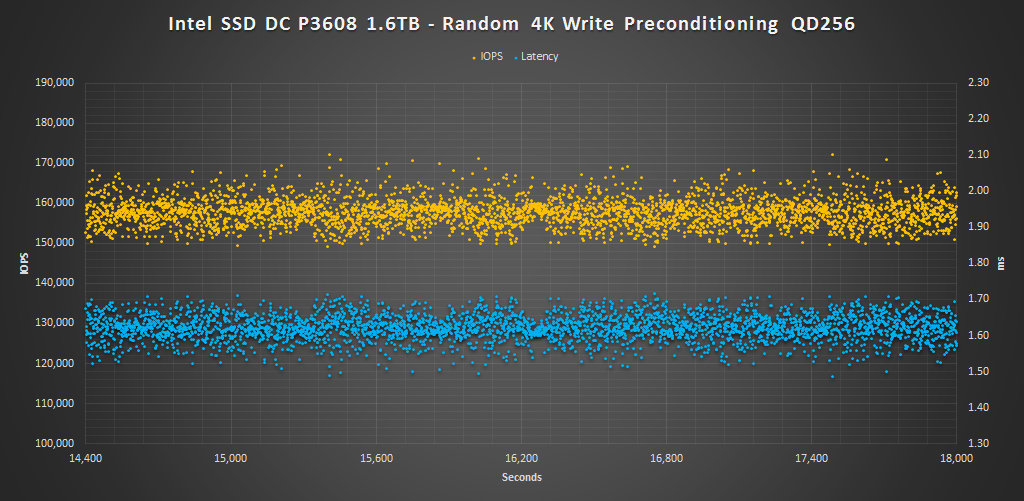

Over the course of our 4KB preconditioning in RAID 0, latency varies within a band of about 0.2ms, which corresponds to a spread of about 20K IOPS, from 150K to 170K IOPS. The majority of results fall around 155-160K IOPS and, for the most part, the minimum 4KB write IOPS stay above the 150K rating.
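The link between the roughly 0.2ms latency band and the 150K-170K IOPS band can be checked with Little's Law, which relates mean latency to the number of outstanding I/Os divided by throughput. The sketch below assumes that the QD256 used for preconditioning is the total number of outstanding I/Os across the RAID 0 volume; `mean_latency_ms` is a hypothetical helper name.

```python
def mean_latency_ms(queue_depth, iops):
    """Little's Law: mean latency = outstanding I/Os / throughput."""
    return queue_depth / iops * 1000.0


QD = 256  # preconditioning queue depth stated in the review

slow = mean_latency_ms(QD, 150_000)  # latency at the low end of the IOPS band
fast = mean_latency_ms(QD, 170_000)  # latency at the high end of the IOPS band
spread = slow - fast

print(f"{fast:.2f} ms to {slow:.2f} ms, spread {spread:.2f} ms")
# prints "1.51 ms to 1.71 ms, spread 0.20 ms"
```

The estimated spread agrees with the 0.2ms band reported for the preconditioning run.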

Next, we look at average read IOPS per queue depth. In RAID 0 there is a consistent exponential increase up to QD128. At QD256 performance peaks at about 825K read IOPS. This is a bit under the 850K IOPS rating, but it is still very impressive performance nonetheless. The single volume reached 450K IOPS.

For 4KB read latency per queue depth, we can see that it is very well managed, with most values at 0.15ms or less up to QD64 for RAID 0 and up to QD32 for the single volume. At QD128 latency starts to increase a bit, and at QD256 it reaches 0.31ms for RAID 0 and nearly double that for the single volume. Another interesting result is that the single volume has lower latency at lower QDs, up until QD32.

Next, we look at average write IOPS per QD. There is a steep ramp-up in performance from QD1-8 in RAID 0, which is nice to see. By QD128 performance maxes out at 158K IOPS. Again, low-QD performance is better with the single volume than with RAID 0.

Write latency is also well managed, staying under 0.25ms up to QD32 for RAID 0 and under 0.50ms for the single volume. From QD64 to QD128 latency doubles with each step up in QD.
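The read and write latency figures above follow the pattern you would expect from Little's Law (mean latency is roughly queue depth divided by IOPS). The sketch below recomputes the QD256 read latencies from the quoted IOPS and then illustrates why latency doubles with queue depth once IOPS stops growing. The write estimates assume that throughput stays flat at the 158K IOPS peak from QD128 to QD256, which is an assumption for illustration and not a measured result from the review; `latency_ms` is a hypothetical helper.

```python
def latency_ms(queue_depth, iops):
    """Estimate mean latency in ms from queue depth and IOPS (Little's Law)."""
    return queue_depth / iops * 1000.0


# Reads at QD256, using the IOPS figures quoted in the review.
raid0_read = latency_ms(256, 825_000)
single_read = latency_ms(256, 450_000)
print(f"RAID 0 read: {raid0_read:.2f} ms, single volume read: {single_read:.2f} ms")

# Writes: assume IOPS holds at the 158K peak (illustrative assumption).
WRITE_PLATEAU_IOPS = 158_000
write_estimates = {qd: latency_ms(qd, WRITE_PLATEAU_IOPS) for qd in (128, 256)}
for qd, ms in write_estimates.items():
    print(f"QD{qd} write estimate: {ms:.2f} ms")

# With throughput fixed, doubling the queue depth doubles the latency.
ratio = write_estimates[256] / write_estimates[128]
```

The RAID 0 read estimate comes out at about 0.31ms, in line with the figure reported above, and the single-volume estimate of about 0.57ms is consistent with "nearly double that".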

The SSD Review The Worlds Dedicated SSD Education and Review Resource |

Random adjectives and desperate efforts to “humanize” the tech resulted in this huge review containing next to no information at all.

There is no easy way to say this: software RAID 0 on PCIe simply makes no sense.

Thanks for your thoughts

Now just make it affordable

Well, for enterprise it is very affordable for what you get. If you are concerned about consumers/enthusiasts I can see where you are coming from, but this is not meant for them. Next year, however, we may be seeing performance like this trickle down.

More than likely next year

As an enterprise product I can see it as a high-end workstation device but not a server device. The lack of RAIDability seems to limit its use to caching and high-speed scratch work area.

I’ve been informed that PCIe hardware RAID will be available on the Skylake CPU and the Xeon version when it comes out later. Now we’re talking………

so this is a preview, not a review… where are the comparisons to P3700 and PM951?

I don’t have access to those drives. We reviewed the P3700 in another system. Because of that, as well as a change in our testing methodology, we cannot graph them side by side. Looking at the P3700’s own review, you can gauge for yourself the approximate performance difference between the two.