LSI’s new PCIe 3.0 HBA and MegaRAID offerings promise oodles of extra performance above and beyond the limitations imposed by PCIe 2.0. While the new Gen3 MegaRAID solutions are still a ways out, we happen to have a Gen3 HBA here.

This particular 9207-8i is destined for our enterprise evaluation system, but since it’s a new product and all, we thought we’d hook up some SSDs and see if performance is really better as a result. Can we break the 3GB/s limit of the PCIe 2.0 LSI 9211-8i?

Can we get some great scaling off of eight Crucial M4 256GBs on 000F firmware?

INTRODUCTION

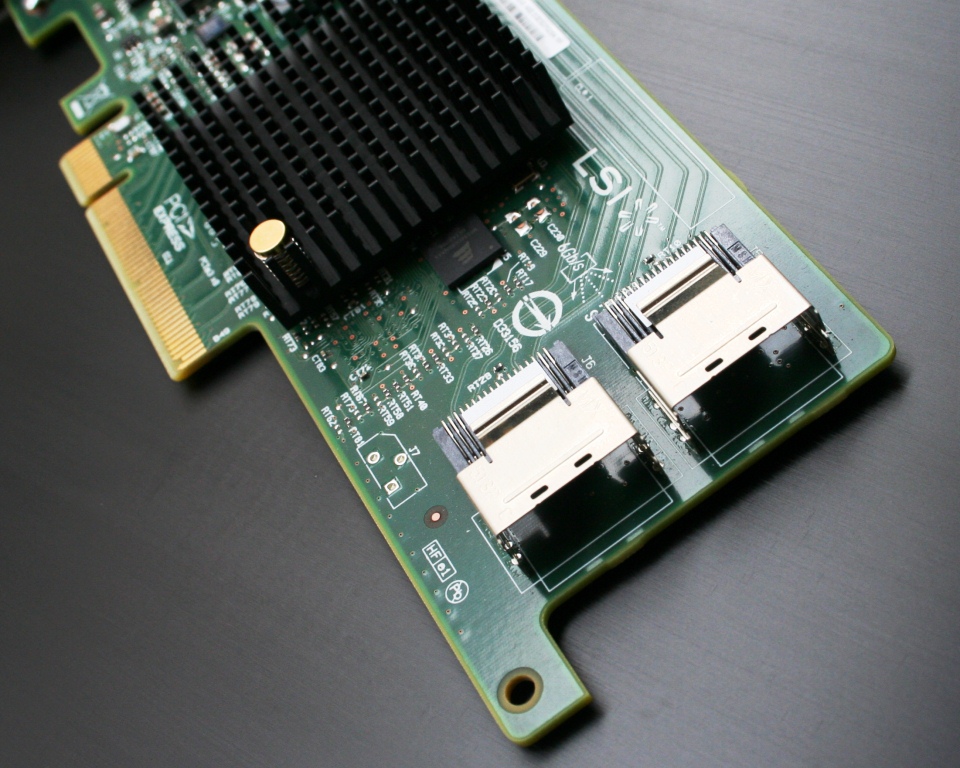

Host Bus Adapters aren’t the most glamorous pieces of equipment. While they might not be flashy, they are certainly a necessary part of day-to-day storage infrastructure. The LSI SAS2008-based 6Gbps products are widely deployed in the storage world, and now the SAS2308-based 9207-8i is set to follow suit. The SAS2308/Fusion-MPT 2.0 IO controller sports PCIe 3.0 burst transfer rates of 8GB/s on 8 lanes of connectivity.

While the term Host Bus Adapter can refer to several things, we generally use it to denote a device which allows SAS and SATA connectivity. An HBA is similar to a RAID card in some respects, but HBAs allow a straight pass-through of the connected device to the host system. The onboard processor can do basic RAID calculations if needed, but most HBAs are used to pass groups of single devices to a host system. At that point, some users may create a software RAID with those drives, but the arrays do not avail themselves of the on-board processing. Real RAID cards have external RAM for caching and beefier logic to deal with calculation-intensive parity operations, and as a result, they carry higher prices. The 9207-8i we’re looking at can be found for as little as $260 right now.

With IR firmware, the LSISAS 2308 SAS Controller can handle RAID 0, 1, 10, and 10E, but most users will stick with IT (Initiator Target) firmware. Special versions of the 9207 HBA will be offered to big OEMs with IR firmware flashed from the factory. These products have a 1 instead of a 0 in the model number, meaning an OEM 9207-8i with IR firmware will be known as the 9217-8i. Otherwise, all Gen3 HBA products will ship with IT firmware. LSI intends to supplant its current PCIe 2.0 6Gbps HBAs with Gen3 “successors”.

We are primarily interested in HBAs for our testing of enterprise SATA and SAS drives. SAS uses a different connection and transmission method than standard SATA devices, and at the moment, no motherboards have SAS ports. This was something originally planned for Intel’s X79 chipset, but it never came to fruition. Regardless, we test enterprise SATA drives on HBAs as well, because few enterprise-class SATA drives will ever end up on a motherboard’s onboard SATA ports. Also, Intel and AMD’s SATA drivers tend to change quite a bit from revision to revision, making accurate testing with those drivers difficult.

The 9207-8i has two internal SFF-8087 mini-SAS ports, much like its 8i predecessors. The two ports allow for SATA or SAS drives to be connected via several cabling options. Up to 256 non-RAID devices can be chained to the 9207-8i, but over 1000 are supported by the SAS2308 processor.

It’s unclear exactly how much bandwidth is on offer with the 9207, as overhead reduces the maximum theoretical amount. In the case of the Gen 2 9211-8i, it was right around 3GB/s. With similar overhead implications, it should be possible to reach well over 6GB/s.
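To put rough numbers on that reasoning, here is a minimal sketch of the link arithmetic. It uses the standard PCIe signaling rates and line encodings (5 GT/s with 8b/10b for Gen2, 8 GT/s with 128b/130b for Gen3). The function name and the idea of carrying the 9211-8i’s observed efficiency straight over to Gen3 are our own assumptions for illustration, not anything published by LSI.

```python
# Back-of-the-envelope PCIe bandwidth for an x8 link (illustrative only).

def link_bandwidth_gbs(gt_per_s, lanes, payload_bits, total_bits):
    """Line rate in GB/s after line encoding, before packet overhead."""
    bits_per_s = gt_per_s * 1e9 * lanes * payload_bits / total_bits
    return bits_per_s / 8 / 1e9

gen2_x8 = link_bandwidth_gbs(5.0, 8, 8, 10)     # PCIe 2.0: 5 GT/s, 8b/10b
gen3_x8 = link_bandwidth_gbs(8.0, 8, 128, 130)  # PCIe 3.0: 8 GT/s, 128b/130b

# Assumption: the roughly 3GB/s seen on the Gen2 9211-8i reflects a fixed
# efficiency that carries over unchanged to the Gen3 link.
observed_gen2 = 3.0
efficiency = observed_gen2 / gen2_x8
gen3_estimate = gen3_x8 * efficiency

print(f"PCIe 2.0 x8 after encoding: {gen2_x8:.2f} GB/s")
print(f"PCIe 3.0 x8 after encoding: {gen3_x8:.2f} GB/s")
print(f"Assumed efficiency from the 9211-8i: {efficiency:.0%}")
print(f"Scaled Gen3 estimate: {gen3_estimate:.2f} GB/s")
```

Under this simple proportional assumption the Gen3 estimate lands close to 6GB/s. Real throughput depends on packet payload size and on the controller itself, so treat it as a ballpark rather than a prediction.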

The SAS2308 controller, sans heatsink. The SAS2308’s IBM PowerPC-based core runs at 800MHz. The HBA has a nominal power consumption of 9.8W.

The SSD Review The Worlds Dedicated SSD Education and Review Resource

Very impressive results. Nice to see the controller scales so beautifully. Was the M4 your first choice of SSD to test with? Do you think it’s a better choice for this use-case than the Vertex 4 or 830?

Great article. Typo though: “The 9702-8i is going into our Enterprise Test Bench for more testing,” -> “The 9207-8i is going into our Enterprise Test Bench for more testing,”

Thanks for the support and FIXED!

Excellent performance!

I will need to build an SSD RAID 0 array around this HBA to work with my ramdisk ISOs (55Gb). Which drives work best with that kind of data?

Thanks in advance and great review!

Thinking about using this in a project. How did you set up your RAID? The 9207 doesn’t support RAID out of the box. Did you flash the firmware or just set up software RAID?

Test it with i540 240GB!

Christopher, what method did you use to create a RAID-0 array? Was this done using Windows Striping? (I am guessing that based on the fact that you’ve used IT firmware, which causes 8 drives to show up as JBOD in the disk manager)

Originally, the article was supposed to include IR mode results alongside the JBOD numbers, but LSI’s IR firmware wasn’t released until some time after the original date of publication. Software RAID through Windows and Linux was unpredictable too, so we chose to focus on JBOD to show what the new RoC could do on 8 PCIe Gen3 lanes. In the intervening months, we did experiment with IR mode, but found performance to be quite similar to software-based RAID. It’s something we may return to in a future article, but needless to say, you won’t get the most out of several fast SSDs with a 9207-8i flashed to IR mode.

I had a chance to try SoftRAID0 on IT firmware, RAID0 with IR firmware, and 9286-8i RAID0, all with Intel 520 480GB SSDs. All configurations are speed-limited at 5 drives since I am running PCIe 2.0 x8. Time for a new motherboard, I guess…

Great review! Very nice to see such great info on this subject.

BTW, does this HBA support TRIM?

There’s a clarification that needs to be made in this article.

The theoretical raw bit rate of PCIe 2.0 is around 4GB/s. PCIe is a packet-based store-and-forward protocol, so there’s packet overhead that limits the theoretical data transfer rate.

At stock settings, this overhead is 20%. However, one can increase the size of PCIe packets in the BIOS to decrease this overhead significantly (to around 3-5%).

I know this because I’ve RAIDed eight 128GB Samsung 840 Pros with the LSI MegaRAID 9271-8iCC on a PCIe 2.0 motherboard, and I’ve hit this limit on sequential reads. In order to get around it, I raised the PCIe packet size, but doing so increases latency and may cause stuttering issues with some GPUs if raised too high.

Could you provide the settings you used for the RAID stripe, i.e. strip size, read policy, write policy, IO policy, etc.? I just purchased this card and have been playing with configurations to get an optimum result.

thanks!

Seems like most people want details of how the drives were configured so they can either try to do the tests themselves or just gain from the added transparency.