SERVER PROFILES

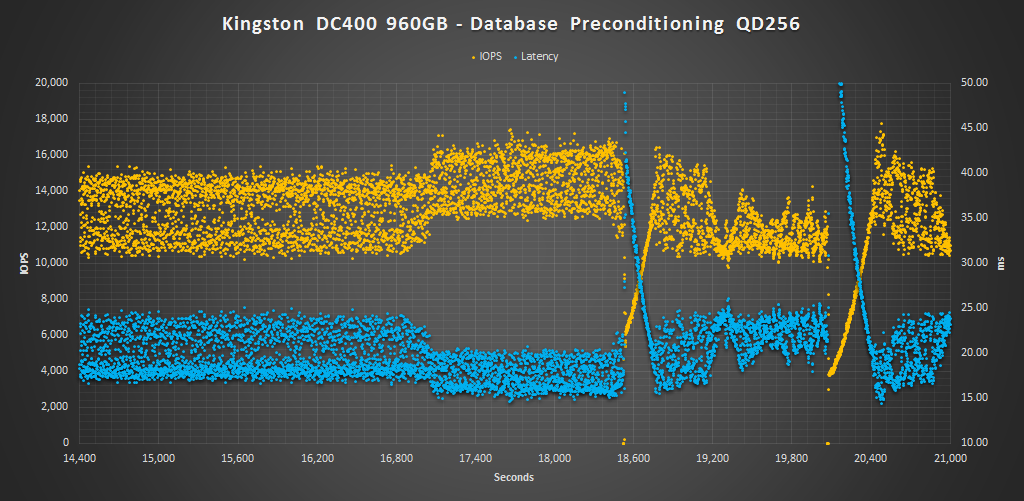

While synthetic 100% read or 100% write workloads do a great job of testing the underlying technology and produce easy-to-understand results, they aren’t always indicative of how the drive will be used by the end user. Workloads that simulate enterprise environments try to bridge that gap without being overly complex. Our process for measuring server workload performance is the same as for our random tests. The drive is first secure erased to get it into a clean state. Next, the drive is filled by sequentially writing to its raw NAND capacity twice. We then precondition the drive with the respective server workload at QD256 until it reaches a steady state. Finally, we cycle through QD1-256 for 5 minutes each while measuring performance. All of this is scripted to run with no breaks in between. The last hour of preconditioning, along with the average IOPS and average latency for each QD, is graphed below.
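The sequence above can be sketched as a simple test plan. This is a minimal Python illustration, not our actual script: the exact queue depths in the QD1-256 sweep are not listed above, so powers of two are assumed, and the phase names are hypothetical.

```python
from dataclasses import dataclass

# Assumption: the sweep steps through powers of two; the review only says "QD1-256".
QUEUE_DEPTHS = [2 ** i for i in range(9)]  # 1, 2, 4, ..., 256
STEP_SECONDS = 5 * 60  # 5 minutes per queue depth, as described above


@dataclass
class Phase:
    name: str    # hypothetical label for the phase
    detail: str  # what the phase does


def test_plan() -> list[Phase]:
    """Return the ordered phases of one server-profile run (illustrative sketch)."""
    phases = [
        Phase("secure_erase", "return the drive to a clean state"),
        Phase("seq_fill", "sequentially write the raw NAND capacity twice"),
        Phase("precondition", "run the server workload at QD256 until steady state"),
    ]
    # One measurement phase per queue depth, run back to back with no breaks.
    phases += [
        Phase(f"measure_qd{qd}", f"run the workload at QD{qd} for {STEP_SECONDS} s")
        for qd in QUEUE_DEPTHS
    ]
    return phases


def sweep_minutes() -> int:
    """Total length of the QD sweep in minutes."""
    return len(QUEUE_DEPTHS) * STEP_SECONDS // 60


def average(samples: list[float]) -> float:
    """Mean of the IOPS or latency samples collected during one QD step."""
    return sum(samples) / len(samples)


if __name__ == "__main__":
    for phase in test_plan():
        print(f"{phase.name:>14}: {phase.detail}")
    print("QD sweep length:", sweep_minutes(), "minutes")  # 9 steps x 5 min = 45
```

Under the power-of-two assumption the measured sweep alone takes 45 minutes, on top of the fill and preconditioning time.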

The Database profile uses 8K transfers, and 67% of operations are reads.
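As a worked example of what this profile asks of the drive, the sketch below splits a total IOPS figure into reads and writes and converts it to bandwidth. It assumes "8K" means 8 KiB and uses a hypothetical 25,000 IOPS; that number is illustrative only and is not a result from this review.

```python
def split_and_bandwidth(total_iops: float, read_fraction: float, block_kib: int = 8):
    """Split mixed-workload IOPS into read/write IOPS and convert the total to MiB/s.

    Assumes every transfer is block_kib KiB (8 KiB for the Database profile).
    """
    read_iops = total_iops * read_fraction
    write_iops = total_iops - read_iops
    mib_per_s = total_iops * block_kib / 1024  # KiB/s -> MiB/s
    return read_iops, write_iops, mib_per_s


# Database profile: 67% reads. 25,000 IOPS is a hypothetical input, not a measurement.
reads, writes, mib = split_and_bandwidth(25_000, 0.67)
print(f"{reads:.0f} read IOPS, {writes:.0f} write IOPS, {mib:.1f} MiB/s")
```

Passing `0.50` instead of `0.67` gives the equivalent split for the Email Server profile.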

During our database run, the non-over-provisioned model lagged behind the competition, but once over-provisioned to 800GB, the DC400 had a fighting chance. At 800GB, the DC400 outperforms the Micron 5100 ECO and comes very close to the Toshiba HK4R. The Samsung and Toshiba SSDs and the Micron 5100 MAX, however, are the clear winners here. Consistency is also much, much better once the drive is over-provisioned, with none of the lag spikes it shows at 960GB.
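For context on the over-provisioning trade-off, the arithmetic is simple. The sketch below counts only the additional spare area created by shrinking the usable capacity from 960GB to 800GB. The drive's factory spare area (raw NAND minus 960GB) is not given here, so it is left out.

```python
DEFAULT_USER_GB = 960  # DC400 capacity as shipped in this review
OP_USER_GB = 800       # usable capacity after over-provisioning


def added_op(default_gb: float, op_gb: float) -> tuple[float, float, float]:
    """Return (GB moved to spare area, % of new user capacity, % of original capacity given up)."""
    spare = default_gb - op_gb
    return spare, spare / op_gb * 100, spare / default_gb * 100


spare, pct_of_user, pct_given_up = added_op(DEFAULT_USER_GB, OP_USER_GB)
print(f"{spare:.0f} GB extra spare: +{pct_of_user:.1f}% OP, {pct_given_up:.1f}% of capacity given up")
```

In other words, the extra 160GB of spare area amounts to 20% of the new user capacity, bought by giving up roughly a sixth of the advertised capacity.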

The Email Server profile is similar to the Database profile: it also uses 8K transfers, but with 50% reads and 50% writes.
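To make the two profiles concrete, here is a hypothetical job-file generator. We have not stated which load generator our scripts use. This sketch assumes fio with the libaio engine, random mixed I/O, and a placeholder device path `/dev/sdX`. Only the block size and read percentage come from the profile descriptions above.

```python
# Block size and read mix come from the profile descriptions; the rest is assumed.
PROFILES = {
    "database": {"bs": "8k", "rwmixread": 67},
    "email": {"bs": "8k", "rwmixread": 50},
}


def fio_job(profile: str, qd: int, device: str = "/dev/sdX", seconds: int = 300) -> str:
    """Render a fio job file for one QD step of a server profile (hypothetical sketch).

    WARNING: running such a job against a raw device destroys the data on it.
    """
    p = PROFILES[profile]
    lines = [
        f"[{profile}-qd{qd}]",
        "ioengine=libaio",    # assumed Linux async I/O engine
        "direct=1",           # bypass the page cache
        "rw=randrw",          # assumed random mixed reads/writes
        f"rwmixread={p['rwmixread']}",
        f"bs={p['bs']}",
        f"iodepth={qd}",
        f"runtime={seconds}",  # 5 minutes per QD step
        "time_based",          # run for the full runtime
        f"filename={device}",  # placeholder device path
    ]
    return "\n".join(lines) + "\n"


print(fio_job("email", 32))
```

The function only renders text; it does not launch fio, so it can be inspected safely before anything touches a drive.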

Just as in our database profile, the DC400’s results are much better once it is over-provisioned, and consistency is much improved. It even edges out the Toshiba HK4R and Micron 5100 ECO.

The SSD Review The Worlds Dedicated SSD Education and Review Resource |


There’s that word “plethora” again.

Edited it out for ya since I seem to use it too much for your liking, haha.

I think it was our E-I-C that didn’t care for it, lol!

Otherwise, for me it would be “Pot, meet Kettle!”

How can you call MLC flash with sequential reads at 560 and writes at 525 entry level for SATA 3? And if you over-provision it to 800, it now beats its competition in categories besides sequential. That sounds a lot better than entry level for SATA 3, no?

I’m referring to it as entry-level due to its overall performance, endurance, and price point within enterprise product lineups. Compared to other read-oriented SATA SSDs at the same capacity, the Kingston typically offers the least performance and lowest endurance among similarly classed <1 DWPD products, and it doesn’t have power-loss protection capacitors like others do in case of a power outage, relying on firmware protection instead. That is why I am referring to it as an entry-level product. Once you move on to 1-3 DWPD SATA SSDs, you are dealing with better-performing drives that last longer, thus not entry-level. Yes, when over-provisioned to 800GB it was able to match or beat some of the competition, but at the expense of usable capacity. If you were to over-provision those other SSDs, you would see similar improvements in performance. At that point it becomes a whole new comparison of price vs. performance vs. capacity vs. endurance…in which case, with all things being equal, the Kingston may be at the lower end of the totem pole once again.
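For readers comparing endurance classes, a DWPD rating converts to total data written as DWPD × capacity × 365 × warranty years. The sketch below uses the 960GB capacity tested here and an assumed 5-year warranty term purely for illustration. Neither the warranty term nor any specific drive’s DWPD rating is taken from this discussion.

```python
def tbw_from_dwpd(dwpd: float, capacity_tb: float, warranty_years: float = 5) -> float:
    """Terabytes written over the warranty implied by a drive-writes-per-day rating.

    warranty_years=5 is an assumption for illustration, not a quoted spec.
    """
    return dwpd * capacity_tb * 365 * warranty_years


CAPACITY_TB = 0.96  # 960GB, the default capacity tested in this review

for dwpd in (0.5, 1, 3):  # a sub-1 DWPD example vs. the 1-3 DWPD range
    print(f"{dwpd} DWPD -> {tbw_from_dwpd(dwpd, CAPACITY_TB):.0f} TB written")
```

Under these assumptions, stepping from a 0.5 DWPD rating to 3 DWPD is a sixfold difference in rated writes at the same capacity.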

I see, said the blind man.