SERVER PROFILES

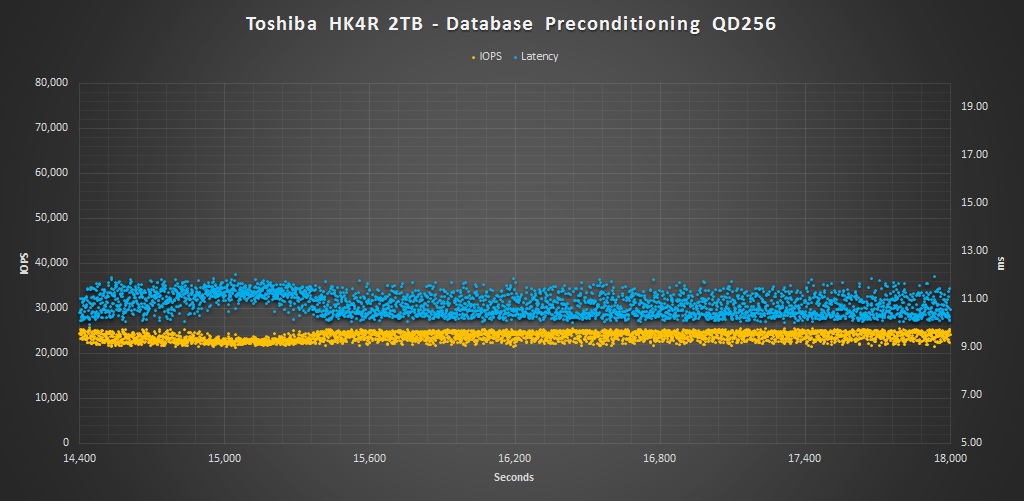

While synthetic 100% read or 100% write workloads do a great job of testing the underlying technology and reporting easy-to-understand results, they aren’t always indicative of how the drive will be used by the end user. Workloads that simulate enterprise environments try to bridge that gap without being overly complex. The process of measuring our server workload performance is the same as measuring random performance. The drive is first secure erased to get it into a clean state. Next, the drive is filled by sequentially writing to the RAW NAND capacity twice. We then precondition the drive with the respective server workload at QD256 until the drive is in a steady state. Finally, we cycle through QD1-256 for 5 minutes each, measuring performance. All of this is scripted to run with no breaks in between. The last hour of our preconditioning, the average IOPS, and the average latency for each QD are graphed below.
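To make the sequence easier to follow, here is a minimal Python sketch of the test plan described above. It is only an illustration, not our actual script: the `Phase` class and the `queue_depths` and `build_plan` helpers are hypothetical names, and stepping the queue depth in powers of two is an assumption, since the text only gives the QD1-256 range.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Phase:
    """One step of the test sequence (hypothetical structure)."""
    name: str
    queue_depth: Optional[int]  # None when queue depth is not stated
    minutes: Optional[int]      # None when the phase runs until a condition is met


def queue_depths(lo: int = 1, hi: int = 256) -> List[int]:
    """Queue depths from lo to hi, doubling each step (assumed spacing)."""
    depths = []
    qd = lo
    while qd <= hi:
        depths.append(qd)
        qd *= 2
    return depths


def build_plan(step_minutes: int = 5) -> List[Phase]:
    """Assemble the phases in the order the text describes them."""
    plan = [
        Phase("secure erase", None, None),
        Phase("sequential fill, 2x RAW NAND capacity", None, None),
        Phase("precondition with server workload until steady state", 256, None),
    ]
    # Measurement sweep: each queue depth runs for a fixed time, back to back.
    plan += [Phase(f"measure at QD{qd}", qd, step_minutes) for qd in queue_depths()]
    return plan


plan = build_plan()
measured_minutes = sum(p.minutes for p in plan if p.minutes is not None)

for phase in plan:
    print(phase.name)
print("timed measurement minutes:", measured_minutes)
```

Under the power-of-two assumption, the sweep covers nine queue depths, so the timed measurement portion takes 45 minutes on top of the erase, fill, and preconditioning phases.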

The Database profile is 8K transfers, and 67% of operations are reads.

Here we can see that the HK4R is very competitive from QD1-16, but once it reaches QD32, the Samsung PM863 gains the edge. Both of these drives leave the M510DC in the dust from QD8 on. At QD32, latency just passed 1.4ms and it achieved about 23K IOPS.
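The latency and IOPS figures at QD32 are tied together by Little's law: with the queue kept full, throughput is roughly the queue depth divided by the average latency. The short sketch below runs that sanity check. The function name is hypothetical, and the inputs are the rounded values quoted above, so the result is only approximate.

```python
def iops_from_latency(queue_depth: int, latency_ms: float) -> float:
    """Estimate IOPS via Little's law: outstanding I/Os / average latency in seconds."""
    return queue_depth / (latency_ms / 1000.0)


# QD32 at roughly 1.4 ms average latency, as quoted in the text.
estimate = iops_from_latency(32, 1.4)
print(f"{estimate / 1000:.1f}K IOPS")
```

The estimate lands at roughly 22.9K IOPS, which agrees with the "about 23K IOPS" read off the chart.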

The Email Server profile is similar to the Database profile, only it uses 8K transfers at 50% reads and 50% writes.
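For readers who want to approximate these two profiles themselves, the sketch below turns each one into an argument list for the open-source `fio` tool. This is an assumption-heavy illustration: the text does not say which benchmark tool we use, the `Profile` class and `fio_args` helper are hypothetical, random access is assumed, and `/dev/sdX` is a placeholder device path. Running a mixed read/write job against a raw device destroys its data.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Profile:
    """A mixed read/write server workload (hypothetical structure)."""
    name: str
    block_size: str  # transfer size as fio expects it, e.g. "8k"
    read_pct: int    # percentage of operations that are reads


# Values taken from the profile descriptions in the text.
DATABASE = Profile("database", "8k", 67)
EMAIL = Profile("email", "8k", 50)


def fio_args(profile: Profile, queue_depth: int,
             device: str = "/dev/sdX", runtime_s: int = 300) -> List[str]:
    """Build an fio command line for one queue-depth step (not executed here)."""
    return [
        "fio",
        f"--name={profile.name}-qd{queue_depth}",
        f"--filename={device}",           # placeholder path, an assumption
        "--ioengine=libaio",              # assumes a Linux host
        "--direct=1",                     # bypass the page cache
        "--rw=randrw",                    # random mixed I/O, an assumption
        f"--rwmixread={profile.read_pct}",
        f"--bs={profile.block_size}",
        f"--iodepth={queue_depth}",
        "--time_based",
        f"--runtime={runtime_s}",         # 5 minutes per queue depth
    ]


print(" ".join(fio_args(DATABASE, 32)))
print(" ".join(fio_args(EMAIL, 32)))
```

The only difference between the two generated command lines is the read percentage, which mirrors how the two profiles are defined.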

The HK4R reached nearly 17K IOPS from QD16-32. Again, the results show it leads at the lower QDs, but from QD16 on the Samsung takes over. Consistency is very good in this test as well.

The SSD Review The Worlds Dedicated SSD Education and Review Resource |
