SSS ENTERPRISE TEST PROTOCOL PARAMETERS

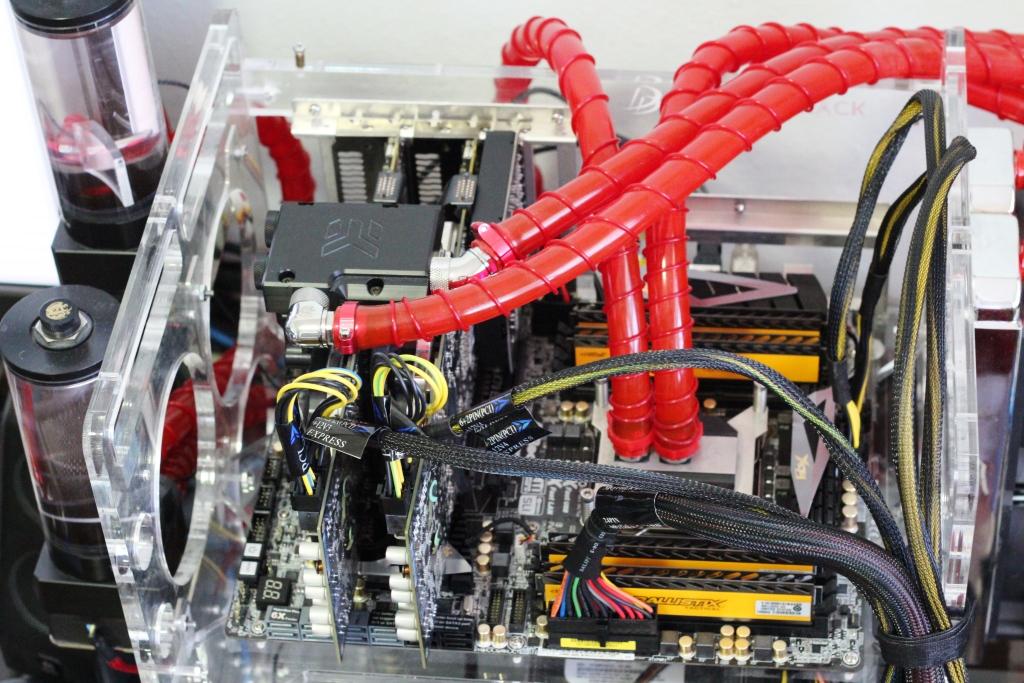

Testing will be conducted on the X79 (Patsburg) chipset. A 9211-8i HBA will connect the devices to the host machine, providing device pass-through without RAID overhead or caching. The 9211-8i also gives us a stable platform that is relatively free from the frequent driver changes that motherboard chipsets are subject to. We will use the Windows Server 2008 R2 operating system.

All tests will be conducted using Iometer 2010, with full random data, unless otherwise specified. All tests must be run consecutively, with no interruptions. Any interruption will require a power down to preserve drive state.

DIRECT ATTACH

Software such as Iometer gives us the flexibility to test these drives with direct attachment to the HBA and a minimum of host processing limitations. This eliminates the slow points and latency constraints that network-based systems would introduce into the testing. SSS is often tested with extraneous equipment connected, which ‘muddies the water’ in terms of the base performance of the SSS itself.

Performance over networks and with SANs is important, but that equipment affects the measurements of the device itself and shifts the focus from the SSS at hand to the equipment used around it.

We are focused on providing accurate measurements of the SSS itself, with no interference from performance limitations introduced by other software and hardware. Different software-based approaches can be inhibited by many aspects of the host system's CPU, RAM, and chipset. Frequent driver updates to host operating systems, and to the software itself, can introduce performance variability over time. Such variability does not provide a stable platform for consistent comparative analysis over an extended period.

We feel that it is important to measure the device itself, with no possible interference.

IOMETER PROFILES

Several Iometer profiles will be used to test several metrics of performance. These tests will be run in the same order through all 3 rounds of testing. These are industry standard profiles that emulate the workloads that the SSS will be placed under during normal usage. The profiles are as follows:

All profiles will be executed in order for three phases of testing. Each profile will be executed at QD 1, 2, 4, 8, 16, 32, 64, and 128. WIP/WBP will run at QD32 until Steady State Convergence. Each QD uses a 5-second ramp followed by 60 seconds of measurement, for both FOB and Steady State runs. All tests cover the full span of the device.
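The sweep described above can be laid out as a simple schedule. The following is a minimal sketch, not the harness actually used: it assumes each profile finishes all of its queue depths before the next profile starts, and the profile names in the usage note are hypothetical placeholders.

```python
# Queue depths, ramp time, and run time as stated in the protocol.
QUEUE_DEPTHS = [1, 2, 4, 8, 16, 32, 64, 128]
RAMP_SECONDS = 5
RUN_SECONDS = 60


def sweep_schedule(profiles):
    """Return (profile, queue_depth, ramp_s, run_s) steps in execution order.

    Assumption: profiles run one after another, each stepping through
    every queue depth before the next profile begins.
    """
    return [
        (profile, qd, RAMP_SECONDS, RUN_SECONDS)
        for profile in profiles
        for qd in QUEUE_DEPTHS
    ]


def sweep_duration_seconds(profiles):
    """Total wall-clock time for one pass, ignoring any gaps between steps."""
    return sum(ramp + run for _, _, ramp, run in sweep_schedule(profiles))
```

For example, `sweep_duration_seconds(["profile_a"])` gives 520 seconds for a single profile: eight queue depths at 65 seconds each.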

STEADY STATE CONVERGENCE VERIFICATION

As defined by SNIA, steady state can be understood as follows:

A device is said to be in Steady State when, for the dependent variable (y) being tracked:

- Range(y) is less than 20% of Ave(y): Max(y)-Min(y) within the Measurement Window is no more than 20% of the Ave(y) within the Measurement Window.

- Slope(y) is less than 10%: Max(y)-Min(y), where Max(y) and Min(y) are the maximum and minimum values on the best linear curve fit of the y-values within the Measurement Window, is within 10% of Ave(y) value within the Measurement Window.
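The two criteria can be checked mechanically on a window of logged values. The sketch below is one reading of the definition above, with some assumptions: samples are taken to be equally spaced, the best linear fit is an ordinary least-squares line, and the function names are illustrative rather than part of any SNIA tooling.

```python
def least_squares_slope(ys):
    """Slope of the ordinary least-squares line through (0, y0), (1, y1), ..."""
    n = len(ys)
    mean_x = (n - 1) / 2
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(ys))
    return sxy / sxx


def is_steady_state(ys, range_limit=0.20, slope_limit=0.10):
    """Apply the range and slope criteria to one Measurement Window.

    ys: the tracked variable (for example IOPS) at equally spaced samples.
    """
    if len(ys) < 2:
        raise ValueError("need at least two samples in the window")
    average = sum(ys) / len(ys)
    # Range criterion: spread of the raw data relative to the average.
    data_excursion = max(ys) - min(ys)
    # Slope criterion: spread of the fitted line across the window.
    fit_excursion = abs(least_squares_slope(ys)) * (len(ys) - 1)
    return (data_excursion <= range_limit * average
            and fit_excursion <= slope_limit * average)
```

A window such as `[92, 96, 100, 104, 108]` passes the range criterion (16 against a limit of 20) but fails the slope criterion (16 against a limit of 10), so it is not yet steady state.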

The SNIA specification calls for the X-axis in the above chart to represent each round of testing. Because this is much too time consuming for realistic application, we will use the X-axis as a function of time. Every 2 minutes the performance of each respective profile at QD32 will be logged and compared until the criteria for the Measurement Window, defined above, are met.

PRECONDITIONING

Preconditioning is the process of putting the SSS under different loading scenarios that bring the drive into Steady State. Preconditioning takes place after the SSS has been secure erased and the device is FOB (Fresh Out of Box). There are two steps to preconditioning:

Workload Independent Preconditioning (WIP)

WIP is defined by SNIA as:

The technique of running a prescribed workload, unrelated, except by possible coincidence, to the test workload, as a means to facilitate convergence to Steady State.

The first execution of each profile will be logged as FOB (Fresh Out of Box) performance. Every QD will be executed for every profile for 60 seconds and logged. Then the capacity of the device will be written twice with 128KiB sequential writes.
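To give a sense of scale for the sequential fill, the number of 128KiB writes needed to cover the device twice can be estimated as below. This is a rough sketch: the capacity passed in is whatever the device reports, and rounding a partial final block up to a whole write is an assumption.

```python
KIB = 1024


def wip_write_count(capacity_bytes, passes=2, block_bytes=128 * KIB):
    """Number of sequential writes needed to cover the device `passes` times.

    A partial final block is counted as one full write (assumption).
    """
    writes_per_pass = -(-capacity_bytes // block_bytes)  # ceiling division
    return writes_per_pass * passes
```

For a hypothetical device reporting exactly 100 GiB, `wip_write_count(100 * 1024**3)` comes to 1,638,400 writes.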

Workload Based Preconditioning (WBP)

WBP is defined by SNIA as:

The technique of running the test workload itself, typically after Workload Independent Preconditioning, as a means to put the device in a Steady State relative to the dependent variable being tested.

Each profile will be executed again, in order, at QD32, until Steady State Convergence is achieved for that respective workload. Once within the Measurement Window for each respective workload, Steady State Performance will be logged following the guidelines above.

Overprovisioning Steady State

As our final round of testing, we will apply 20% overprovisioning to the respective device to test performance. Overprovisioning (OP) is the practice of leaving large amounts of unformatted spare area on the device to increase performance and endurance under very demanding workloads.

Most enterprise class SSS already ships with massive amounts of OP, yet additional OP still yields gains. Some lower-end SSS benefits tremendously from OP, so all SSS tested will have OP performance logged as a means of facilitating comparison.
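The size of the area left for formatting follows directly from the OP percentage. The sketch below assumes the 20% is taken from the capacity the device reports and that the partition is rounded down to a 1 MiB boundary; both are assumptions, since the percentage can be defined in more than one way.

```python
MIB = 1024 * 1024


def formatted_bytes(capacity_bytes, op_percent=20, align_bytes=MIB):
    """Bytes to format, leaving `op_percent` of the capacity unformatted.

    Assumption: the percentage applies to the reported capacity, and the
    result is rounded down to a multiple of `align_bytes`.
    """
    if not 0 <= op_percent < 100:
        raise ValueError("op_percent must be in [0, 100)")
    usable = capacity_bytes * (100 - op_percent) // 100
    return usable - (usable % align_bytes)
```

With a hypothetical 1000 MiB device, `formatted_bytes(1000 * MIB)` returns 800 MiB, leaving 200 MiB as spare area.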

POWER TESTING

Power usage is a huge concern in the enterprise sector. Each profile will have power consumption logged while under a load of QD32, with idle and start-up values recorded as well. The power consumption testing method is not as accurate as lab testing, but serves to illustrate the power efficiency at various loads with a +/- 10% sampling variance. More detailed info on power considerations will be provided on the following pages.

HEAT TESTING

Heat is also a huge concern for the enterprise sector. Heat will be measured by monitoring the embedded temperature sensors on those devices that utilize them. No forced air will be used to cool the devices, so the results of heat testing will be representative of worst-case scenarios. The intention is to measure the total heat that is generated. In real life scenarios with ventilation, results will be much better.

Many SSS devices do not have embedded temperature sensors. For these devices we have configured two digital temperature sensors with synchronized calibrations. This allows us to log both the ambient temperature and the temperature of the probe placed on the device itself. From these we can log the T-Delta of the device to ambient, so that a realistic comparison of Delta can be made.
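T-Delta is simply the device probe reading minus the ambient reading taken at the same moment. A minimal sketch follows, assuming both sensors report degrees Celsius and are sampled in pairs; the readings in the usage note are made up for illustration.

```python
def t_delta(device_c, ambient_c):
    """Temperature rise of the device over ambient, in degrees Celsius."""
    return device_c - ambient_c


def peak_t_delta(samples):
    """Largest T-Delta across paired (device_c, ambient_c) readings."""
    return max(t_delta(device_c, ambient_c) for device_c, ambient_c in samples)
```

For the hypothetical readings `[(30.0, 22.0), (41.5, 22.5), (39.0, 23.0)]`, `peak_t_delta` returns 19.0.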

SSS with internal sensors will also be logged with external sensors, as a fair means of T-Delta comparison with SSS that lack internal sensors. More detail on Heat and its importance in the data center will be provided on the following pages.

The SSD Review The Worlds Dedicated SSD Education and Review Resource |

Hi Paul,

You say ‘All tests must be ran consecutively, with no interruptions’ and I understand why as any pause would allow GC activity the opportunity to step back from the transition to steady state. How do you kick off the next test in a series in IOmeter? Are you doing this manually in the IOmeter control panel (as quickly as you can) or have you automated it in some way?

Regds, JR